SEO Audit Data Export: Why Most Tools Hide Your Results

Most teams pick an SEO audit tool that optimizes reports, not outcomes. The result is a clean PDF and a dirty backlog. Engineers get 200 issues, zero priorities, and no clear lift. SEO audit guides focus on report efficiency (Swydo's agency playbook emphasizes streamlined workflows), but faster reports don't ship fixes. The bottleneck isn't documentation - it's decision-making and engineering allocation.

So what is the best SEO audit tool for a technical team? It’s the one that converts findings into committed tickets. We built MygomSEO for that job: decision-first audits, scoped to real engineering time.

We’ll show our system for turning SEO data into shipped changes and measurable lift. It’s based on delivery work where engineering hours are the scarcest resource.

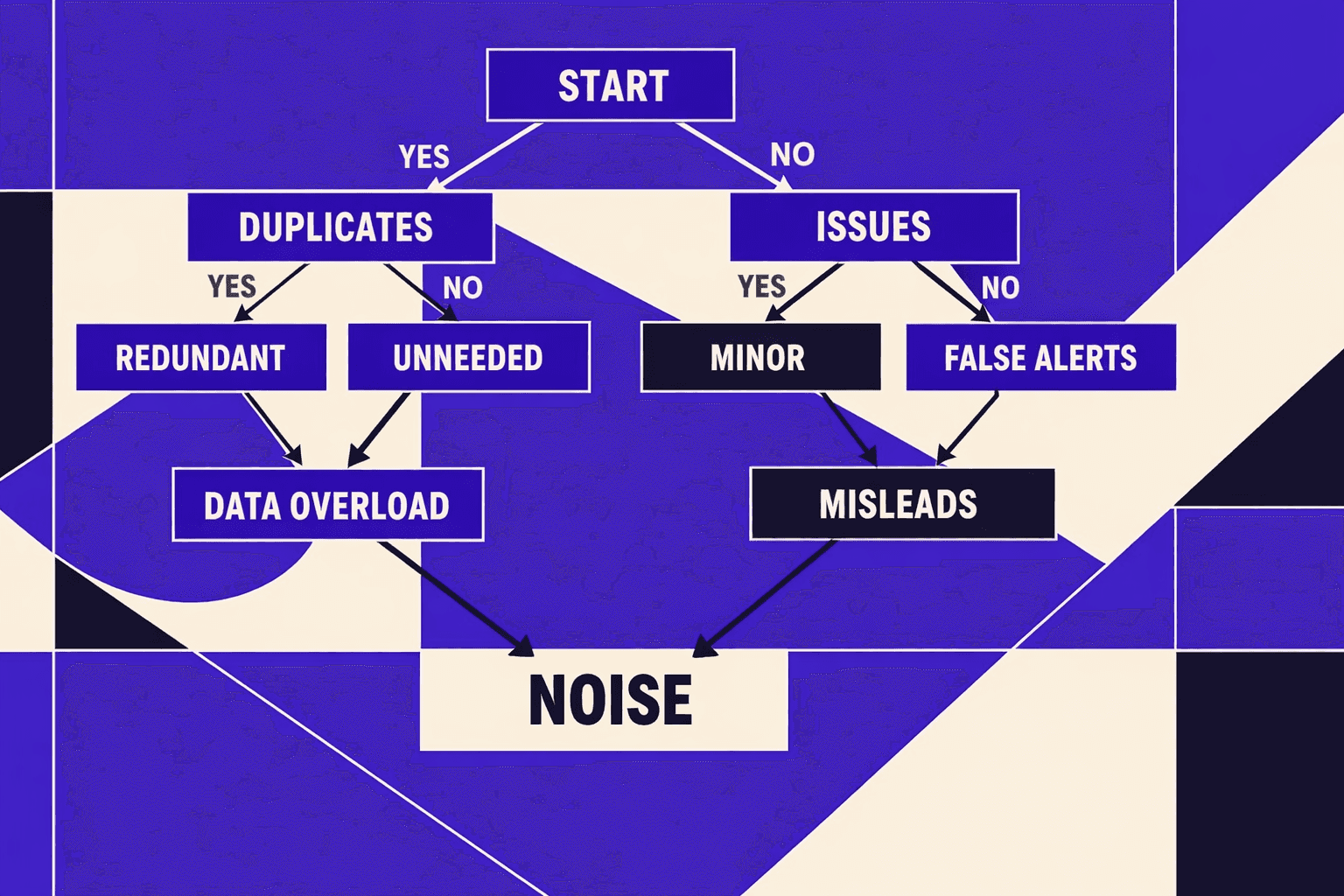

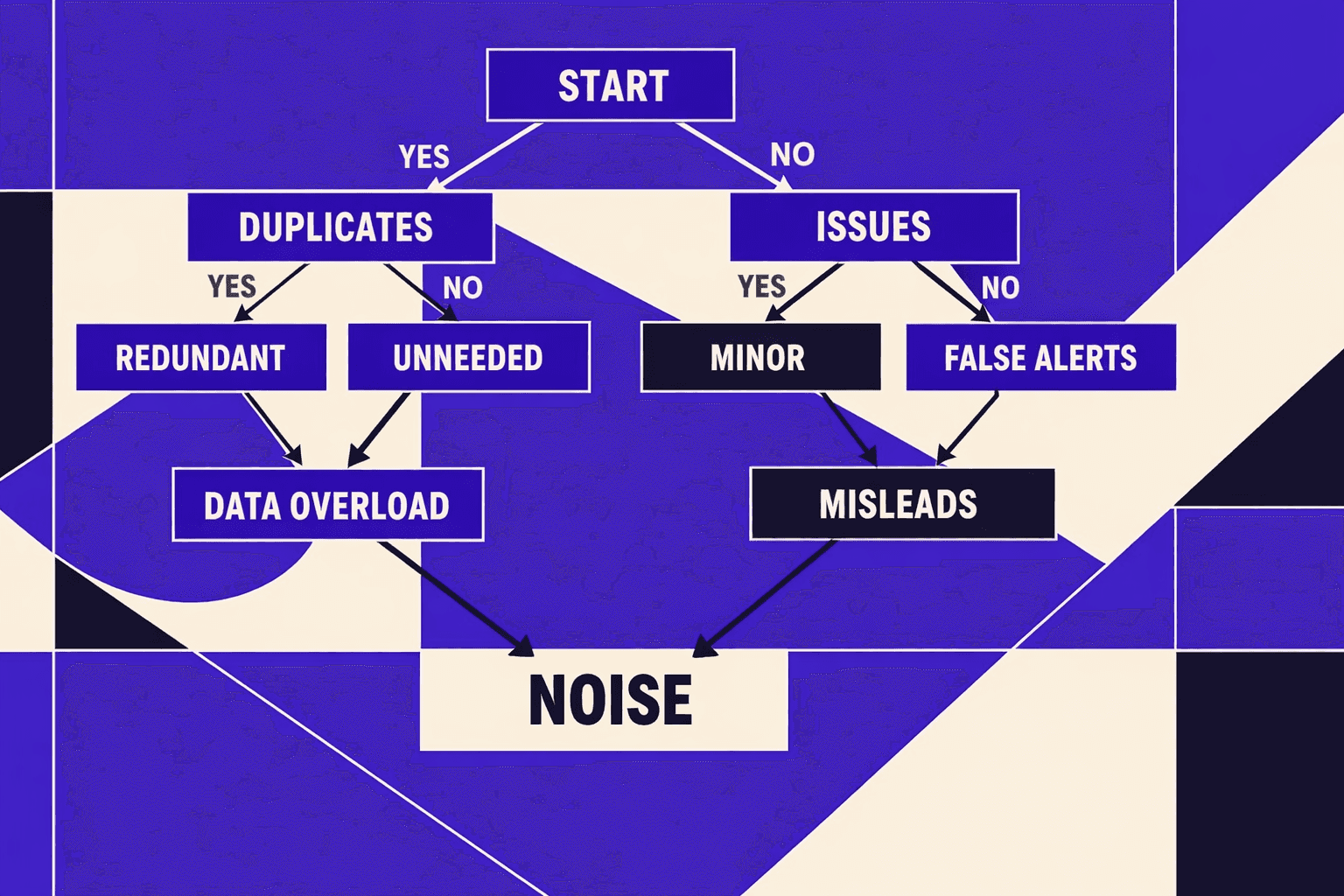

Current State: Why Most SEO Audit Tools Create Noise

1. Checklists scale but accountability does not

I keep seeing the same failure mode.

A tool exports 300 "issues" and calls it an audit.

But it never assigns an owner, an SLA, or a test.

Peter Rota's research on audit failure modes confirms what we see in production: the hard part isn't discovery, it's turning findings into shipped work (LinkedIn).

One moment still stings.

We had 47 tabs open, arguing about “duplicate titles.”

Meanwhile, a noindex rule blocked a whole template.

If we can’t tie a finding to traffic, revenue, or risk, it’s trivia.

2. Technical SEO is now a systems problem, not a keyword problem

Modern sites don’t lose because they “missed keywords.”

They lose because the system leaks.

Crawl budget control breaks. Indexability governance gets sloppy.

Templates drift across teams and releases.

Enterprise auditing guides keep circling this reality.

You need repeatable checks across templates and deployments, not a one-off PDF (Screaming Frog).

That’s why our audits focus on control points.

Rendering, canonicals, parameter rules, sitemaps, and internal paths.

This is also where “SEO transparency” matters.

If we can’t verify a fix after deploy, we’re guessing (Swydo).

For deeper internal link failure patterns, I point teams to Why Most SEO Audit Tools Miss Internal Linking Problems.

3. Why free SEO checker outputs mislead stakeholders

A free SEO checker scan helps for quick spot checks.

I still use them when I need a fast sanity test.

But they rarely model rendering, link equity flow, or indexation behavior.

So stakeholders leave with “urgent errors” that don’t change outcomes.

Can we run a technical SEO audit for free and still get reliable answers?

Yes - for narrow questions with clear verification.

No - for large sites, JS rendering, or index governance.

Pricing pressure makes this worse.

According to XOVI's 2025 research, most enterprise tools start at $99/month, with entry plans around $130 and Screaming Frog at $189.

Cheap detection is easy.

Prioritization, ownership, and verification are the real product.

That’s the difference between “noise” and a technical SEO roadmap.

Our Perspective: We Built An SEO Audit Tool For Shipping Fixes

Design goal: One source of truth for indexability

Most teams pick an SEO audit tool based on reports.

Engineers pick it based on trust, scope, and repeatability.

The industry treats audits as snapshots. That's the core failure. A single crawl gives you a moment in time - but production sites mutate hourly. The only real solution is continuous verification: detect, assign, fix, verify, measure. When we committed to that loop, it forced an uncomfortable choice: indexability needed one source of truth, not competing spreadsheets.

So we merged inputs that disagree on purpose.

Crawling, rendering, Search Console, log sampling, and template signals sit side by side.

That mix blocks single-source bias during a technical SEO audit.
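As a rough illustration of what "side by side" can mean in practice, the sketch below compares per-URL signals and reports where sources conflict. The `UrlSignals` fields and the three conflict rules are assumptions made for this example, not a description of any particular tool's schema.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UrlSignals:
    """Per-URL observations from each input (all field names are hypothetical)."""
    url: str
    crawled_indexable: bool   # raw HTML crawl says the page can be indexed
    rendered_indexable: bool  # same check applied to the rendered page
    gsc_indexed: bool         # Search Console reports the URL as indexed
    bot_hits: int             # search-bot requests seen in the log sample


def disagreements(signals: list[UrlSignals]) -> dict[str, list[str]]:
    """Return URL -> list of conflicts between sources (empty URLs omitted)."""
    report: dict[str, list[str]] = {}
    for s in signals:
        conflicts = []
        if s.crawled_indexable != s.rendered_indexable:
            conflicts.append("raw and rendered HTML disagree on indexability")
        if s.gsc_indexed and not s.rendered_indexable:
            conflicts.append("indexed in Search Console but rendered page is not indexable")
        if s.rendered_indexable and not s.gsc_indexed and s.bot_hits == 0:
            conflicts.append("indexable but never requested in the log sample")
        if conflicts:
            report[s.url] = conflicts
    return report
```

URLs where every source agrees drop out of the report, so the output is a list of questions to investigate rather than a verdict on which source is right.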

Design goal: Evidence engineers can trust

I remember our first production run vividly.

Search Console claimed 400 pages were indexed. Our crawler found 287. The log sample showed partial hits.

Three tools, three truths - and we had no way to decide which one was real.

That was the moment we stopped chasing “more findings.”

We started chasing proofs an engineer can reproduce.

We normalize everything into five buckets that executives understand fast:

- Indexability

- Crawl efficiency

- Information architecture

- Performance

- Duplication

Then we attach evidence that survives code review.

Rendered HTML deltas. Canonical chains. Status history. Log hits. Template owners.

Design goal: SEO transparency from finding to release

Tools fail when they hide the why.

Or they hide the path to “done.”

Some teams argue a free SEO checker is enough.

It is - for spot checks and quick hygiene.

But the minute you need release-grade accountability, you need traceability.

Pricing also tricks buyers into the wrong behavior.

According to Best SEO Audit Tools for 2025: What Really Works (and Who It's For), some scans start at $19, others begin at $39, and Pro plans can start at $69/year.

Low entry price often buys shallow exports.

Limited exports protect a business model, not your backlog.

That is why we treat SEO transparency as a product requirement.

How we implemented it: crawl, render, log, correlate, verify

We implemented the pipeline in five verbs.

Crawl, render, log, correlate, verify.

Crawl gives coverage, but not browser truth.

Render shows what Googlebot sees on real templates.

Logs tell us what crawlers actually requested.

Correlation is where we win engineering trust.

We connect URLs to templates, deploys, and owners.

Verification closes the loop after the merge.
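The correlation step can be sketched as a mapping from URL paths to templates and owners, so that hundreds of URL-level findings collapse into a handful of template-level ones. The route patterns and team names below are hypothetical; a real mapping would more likely come from the router configuration or an ownership file.

```python
import re
from collections import defaultdict

# Hypothetical route patterns and owning teams, for illustration only.
TEMPLATES = [
    ("product", re.compile(r"^/p/[^/]+$"), "catalog-team"),
    ("category", re.compile(r"^/c/[^/]+(/[^/]+)?$"), "catalog-team"),
    ("article", re.compile(r"^/blog/[^/]+$"), "content-team"),
]


def template_for(path: str) -> tuple[str, str]:
    """Return (template, owner) for the first pattern that matches the path."""
    for name, pattern, owner in TEMPLATES:
        if pattern.match(path):
            return name, owner
    return "unmapped", "unassigned"


def group_findings(findings: list[tuple[str, str]]) -> dict[tuple[str, str, str], list[str]]:
    """Group (path, issue) pairs by (template, owner, issue)."""
    grouped = defaultdict(list)
    for path, issue in findings:
        name, owner = template_for(path)
        grouped[(name, owner, issue)].append(path)
    return dict(grouped)
```

Grouping this way makes a template-wide problem, such as a noindex rule applied to every product page, show up as one finding with one owner instead of one row per URL.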

Finally, we ship outputs where engineers live:

- Tickets with repro steps and expected vs actual behavior

- Affected URLs with the template or route pattern

- Verification queries to confirm the fix post-release
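One way to picture those outputs is a small ticket record that carries the three items above. This is a minimal sketch: the `AuditTicket` class, its field names, and the plain-text layout are assumptions for illustration, not the format of any specific issue tracker.

```python
from dataclasses import dataclass, field


@dataclass
class AuditTicket:
    """One engineering ticket built from an audit finding (hypothetical schema)."""
    title: str
    route_pattern: str                 # template or route the finding applies to
    expected: str
    actual: str
    repro_steps: list[str]
    sample_urls: list[str] = field(default_factory=list)
    verification: str = ""             # check to run after release

    def render(self) -> str:
        """Render the ticket as plain text for pasting into a tracker."""
        lines = [
            self.title,
            f"Route pattern: {self.route_pattern}",
            f"Expected: {self.expected}",
            f"Actual: {self.actual}",
            "Repro steps:",
        ]
        lines += [f"  {i}. {step}" for i, step in enumerate(self.repro_steps, start=1)]
        lines.append("Affected URLs (sample):")
        lines += [f"  - {url}" for url in self.sample_urls]
        lines.append(f"Verify after release: {self.verification}")
        return "\n".join(lines)


ticket = AuditTicket(
    title="Category template sends noindex via X-Robots-Tag",
    route_pattern="/c/{slug}",
    expected="200 with no noindex directive",
    actual="200 with X-Robots-Tag: noindex",
    repro_steps=["Request /c/shoes", "Inspect the X-Robots-Tag response header"],
    sample_urls=["/c/shoes", "/c/boots"],
    verification="Re-request the sample URLs and confirm the header is absent",
)
print(ticket.render())
```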

If you want an SEO audit tool engineers will use, pick one that ships work.

Not one that ships PDFs. For deeper context, I laid out our approach in AI SEO Audit Tools Drive Technical SEO Results for Modern Teams.

Evidence: Our Results When Audits Become Product Work

1. What we measure beyond error counts

When we treat a technical SEO audit as product work, we track outcomes, not findings.

Error counts feel precise, but they don't map to search behavior.

We anchor reporting to four metrics that engineering can move.

Valid indexed growth. Crawl waste reduction. Template coverage. Query/page alignment shifts.

If a fix doesn’t change those curves, it’s not a win.

It’s just a cleaner spreadsheet.
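To make one of those metrics concrete, here is a small sketch of a crawl waste ratio. The definition used here (the share of sampled bot requests that went to URLs not on the indexable list) is an assumption for this example; teams may define crawl waste differently.

```python
def crawl_waste_ratio(bot_hits: dict[str, int], indexable: set[str]) -> float:
    """Share of sampled bot requests spent on URLs that are not indexable.

    bot_hits maps URL -> number of search-bot requests in the log sample.
    indexable is the set of URLs the audit considers valid index targets.
    """
    total = sum(bot_hits.values())
    if total == 0:
        return 0.0  # no bot traffic in the sample, nothing to measure
    wasted = sum(count for url, count in bot_hits.items() if url not in indexable)
    return wasted / total


hits = {"/a": 6, "/a?sort=price": 3, "/b": 1}
print(crawl_waste_ratio(hits, indexable={"/a", "/b"}))
```

Tracking the same ratio before and after a release, over the same log window length, is what turns it into a curve rather than a one-off number.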

2. Client impact patterns we see repeatedly

The biggest lifts rarely come from “more pages.”

They come from fewer, better pages.

We keep seeing the same three patterns.

First, teams remove index bloat that never deserved to rank.

Second, they fix canonical and robots contradictions that split signals.

Third, they shorten internal link paths to revenue pages.

That last one looks simple on paper.

In production, it’s where politics and nav constraints collide.

It’s also why I care so much about SEO transparency over pretty dashboards.

Peter Rota’s point about audits failing to deliver results resonates here, because the hard part is execution, not discovery (LinkedIn post).

3. Example wins: indexation cleanup, internal linking, and CWV

I still remember one release night.

We had one tab open for the crawl, one for renders, and one for headers.

The team pushed a template change, and we watched URLs flip states.

Indexation cleanup was the first win.

We noindexed thin faceted pages and killed parameter traps.

Then we consolidated canonicals to a single, stable version per template.
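Before consolidating, it helps to see where each canonical declaration actually ends up. The sketch below follows declarations hop by hop and flags loops and overly long chains. The `canonical_of` mapping (URL to its declared canonical) and the `max_hops` default are assumptions for the example.

```python
def resolve_canonical(
    url: str, canonical_of: dict[str, str], max_hops: int = 10
) -> tuple[str, list[str], str]:
    """Follow canonical declarations starting at url.

    Returns (last_url, chain, status), where status is "ok", "loop",
    or "too_long". A URL with no entry is treated as self-canonical.
    """
    chain = [url]
    seen = {url}
    current = url
    while True:
        target = canonical_of.get(current, current)
        if target == current:            # self-canonical: the chain ends here
            return current, chain, "ok"
        if target in seen:               # declaration points back into the chain
            chain.append(target)
            return current, chain, "loop"
        if len(chain) > max_hops:        # already followed max_hops declarations
            return current, chain, "too_long"
        chain.append(target)
        seen.add(target)
        current = target


declared = {"/a?x=1": "/a", "/a": "/a", "/b": "/c", "/c": "/b"}
print(resolve_canonical("/a?x=1", declared))
print(resolve_canonical("/b", declared))
```

Any result whose chain has more than two entries, or whose status is not "ok", is a candidate for the single stable version per template described above.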

That matters because most URLs never earn traction.

Research from Ahrefs shows 96.55% of pages get zero organic traffic, largely due to weak backlink profiles.

So we stop funding pages that can't win - and redirect resources to the 3.45% that can.

Internal linking was the second win.

We moved key category and product pages closer to the root.

We also cleaned anchor intent to match queries.
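"Closer to the root" can be measured as click depth: the fewest internal links a crawler must follow from the homepage to reach a page. A minimal breadth-first sketch follows; the `links` adjacency mapping and the `max_depth` threshold of 3 are illustrative assumptions, not recommended values.

```python
from collections import deque
from typing import Optional


def click_depths(links: dict[str, list[str]], root: str = "/") -> dict[str, int]:
    """Breadth-first search over internal links; returns page -> minimum click depth."""
    depths = {root: 0}
    queue = deque([root])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:          # first visit is the shortest path
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def too_deep(
    links: dict[str, list[str]], key_pages: list[str], max_depth: int = 3, root: str = "/"
) -> dict[str, Optional[int]]:
    """Key pages deeper than max_depth, or unreachable from root (depth None)."""
    depths = click_depths(links, root)
    return {
        page: depths.get(page)
        for page in key_pages
        if page not in depths or depths[page] > max_depth
    }
```

Running `too_deep` on the link graph before and after a navigation change gives a simple delta for the revenue pages that matter.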

CWV was the third win.

We reduced layout shifts on templated pages and removed blocking scripts.

We didn’t chase perfect scores. We chased stable user experience.

This is also where a good SEO audit tool earns its keep.

Not by flagging issues, but by proving the deltas.

4. How we validate that a fix worked

Verification is non-negotiable.

We re-crawl after release, with the same scope.

We re-render key templates, not just HTML fetches.

Then we confirm headers at scale.

Status codes. Canonical targets. X-Robots-Tag. Cache behavior.
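A header check of this kind can be written as a pure function over an already-fetched response, which keeps it easy to run across thousands of URLs. The sketch below is deliberately simplified: it only inspects the status code, the `X-Robots-Tag` header, and a canonical declared in the HTTP `Link` header. It does not parse HTML canonicals or cache headers, and the function name and parameters are assumptions for the example.

```python
import re
from typing import Optional

# Matches a Link header entry such as: <https://example.com/a>; rel="canonical"
_LINK_CANONICAL = re.compile(r'<([^>]+)>\s*;\s*rel="?canonical"?', re.IGNORECASE)


def check_response(
    status: int,
    headers: dict[str, str],
    expected_canonical: Optional[str] = None,
    expect_indexable: bool = True,
) -> list[str]:
    """Return a list of problems found in one response (empty list means pass)."""
    h = {name.lower(): value for name, value in headers.items()}
    problems = []
    if status != 200:
        problems.append(f"status {status}, expected 200")

    # Simplified parsing: "googlebot: noindex" and "noindex, nofollow" both yield "noindex".
    directives = {
        part.split(":")[-1].strip().lower()
        for part in h.get("x-robots-tag", "").split(",")
    }
    blocked = bool(directives & {"noindex", "none"})
    if blocked and expect_indexable:
        problems.append("X-Robots-Tag blocks indexing")
    if not blocked and not expect_indexable:
        problems.append("expected noindex in X-Robots-Tag")

    if expected_canonical is not None:
        match = _LINK_CANONICAL.search(h.get("link", ""))
        found = match.group(1) if match else None
        if found != expected_canonical:
            problems.append(f"canonical header is {found!r}, expected {expected_canonical!r}")
    return problems
```

Because the function takes plain values, the same check can run against live responses after a release or against stored responses from the pre-release crawl.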

After that, we cross-check GSC deltas for the affected URL sets.

We also report in a way leaders can fund.

Impact estimate, effort range, dependencies, and time-to-value.

Swydo’s audit framework aligns with that leadership lens (Swydo).
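Those reporting fields can also drive the ordering of the backlog. The sketch below ranks fixes by impact per effort-day and pushes blocked work to the end. The `Fix` fields, the midpoint-of-range effort estimate, and the scoring formula are all assumptions for illustration; the impact unit is whatever the team already estimates in.

```python
from dataclasses import dataclass


@dataclass
class Fix:
    """A candidate fix with the fields leaders ask for (hypothetical schema)."""
    name: str
    impact: float                      # estimated impact, in the team's own unit
    effort_days: tuple[float, float]   # (low, high) effort range, both > 0
    blocked_by: tuple[str, ...] = ()   # names of unfinished dependencies

    @property
    def score(self) -> float:
        """Impact per effort-day, using the midpoint of the effort range."""
        low, high = self.effort_days
        return self.impact / ((low + high) / 2)


def prioritize(fixes: list[Fix]) -> list[Fix]:
    """Unblocked fixes first, then by descending impact per effort-day."""
    return sorted(fixes, key=lambda f: (bool(f.blocked_by), -f.score))


backlog = [
    Fix("consolidate canonicals", impact=3000, effort_days=(8, 12)),
    Fix("remove template noindex", impact=1000, effort_days=(1, 3)),
    Fix("rebuild nav links", impact=5000, effort_days=(1, 1), blocked_by=("design review",)),
]
print([f.name for f in prioritize(backlog)])
```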

One more point leaders ask me every quarter: how often should we run an SEO audit tool on a changing website?

For fast-moving sites, we run focused checks weekly and a deeper crawl monthly.

For slower sites, monthly checks and a quarterly deep audit usually hold.

The north star is simple.

If your templates ship weekly, your audits must too.

If only 3.45% of pages get any organic traffic, as the Ahrefs research cited above suggests, you can’t afford blind spots.

If internal linking keeps surprising you, go deeper here: Why Most SEO Audit Tools Miss Internal Linking Problems.

Counterarguments: What This Means Next

Free checkers still have a role. We use them too, as fast hygiene scans. But they fall apart where modern technical SEO lives: JavaScript rendering, sitewide template changes, and release-by-release verification. They rarely prove what Googlebot actually receives. They don’t reconcile crawl behavior with indexability. And they can’t tell you if a fix stayed fixed after the next deploy. That gap isn’t academic - it’s operational risk.

Here’s the shift I see heading deeper into 2026: the competitive advantage won’t come from finding more issues. It will come from running a continuous technical SEO audit loop that behaves like product quality. That means checks tied to deployments, owners tied to systems, and verification tied to acceptance criteria. When a canonical rule breaks, the right team should know that day. When rendering changes, we should catch the delta before traffic does. If you can’t attach ownership and a repeatable test, you don’t have an audit. You have a backlog.

What we’ve achieved with our SEO audit tool is not “more data.” We’ve turned audits into shipped fixes with provable outcomes: fewer regressions, faster time-to-verify after releases, and cleaner alignment between engineering work and search impact. We’ve also reduced the handoff tax - less manual triage, fewer meetings to explain basics, and more confidence when stakeholders ask, “Did we actually fix it?”

My recommendation is direct:

- Standardize your audit loop. Make it a system, not an event.

- Define severity by business impact, not by tool labels.

- Publish a transparency dashboard that stakeholders can self-serve.

If you’re tired of audits that end as PDFs and reopen as incidents, it’s time to instrument your technical SEO like you instrument uptime. Ready to build that loop with us? Learn more, and let’s talk through your deployment reality.