The Ridiculous Cost of Overpriced SEO Tools (And Smarter Alternatives)

A technical SEO audit fails when we treat it like a quarterly report. It works when we run it as a continuous system. Modern sites change daily, and the biggest losses come from releases that quietly break crawlability, rendering, and indexation. In Q1 2026 planning cycles, every client asked us to justify tool ROI. Budget pressure is real: three clients moved from enterprise Ahrefs ($399/mo) to free alternatives plus custom scripts, while another cut its Botify contract after we proved Google Search Console plus log analysis caught the same issues faster.

We built an automated audit workflow that runs with every release. It flags risk fast, then routes fixes by impact, not opinion. Recent industry discussions point to 9x better outcomes when teams prioritize execution over tooling.

In this article, we’ll show our workflow, our prioritization logic, and results across clients. This comes from hands-on work across stacks, CI pipelines, and real release processes.

Why Technical SEO Audit Reports Fail

The checklist trap we keep seeing

Most teams run a technical SEO audit like a compliance exercise.

The deliverable looks “complete,” so it feels safe.

Then engineering asks the only question that matters: what do we ship first?

I still remember one Friday release train.

We had 214 audit items, six owners, and zero tie-breaker rules.

By Monday, nothing shipped, because nobody could defend the tradeoffs.

That’s the checklist trap.

We optimize for coverage, not decisions.

A better audit forces prioritization, with explicit risk and expected impact.

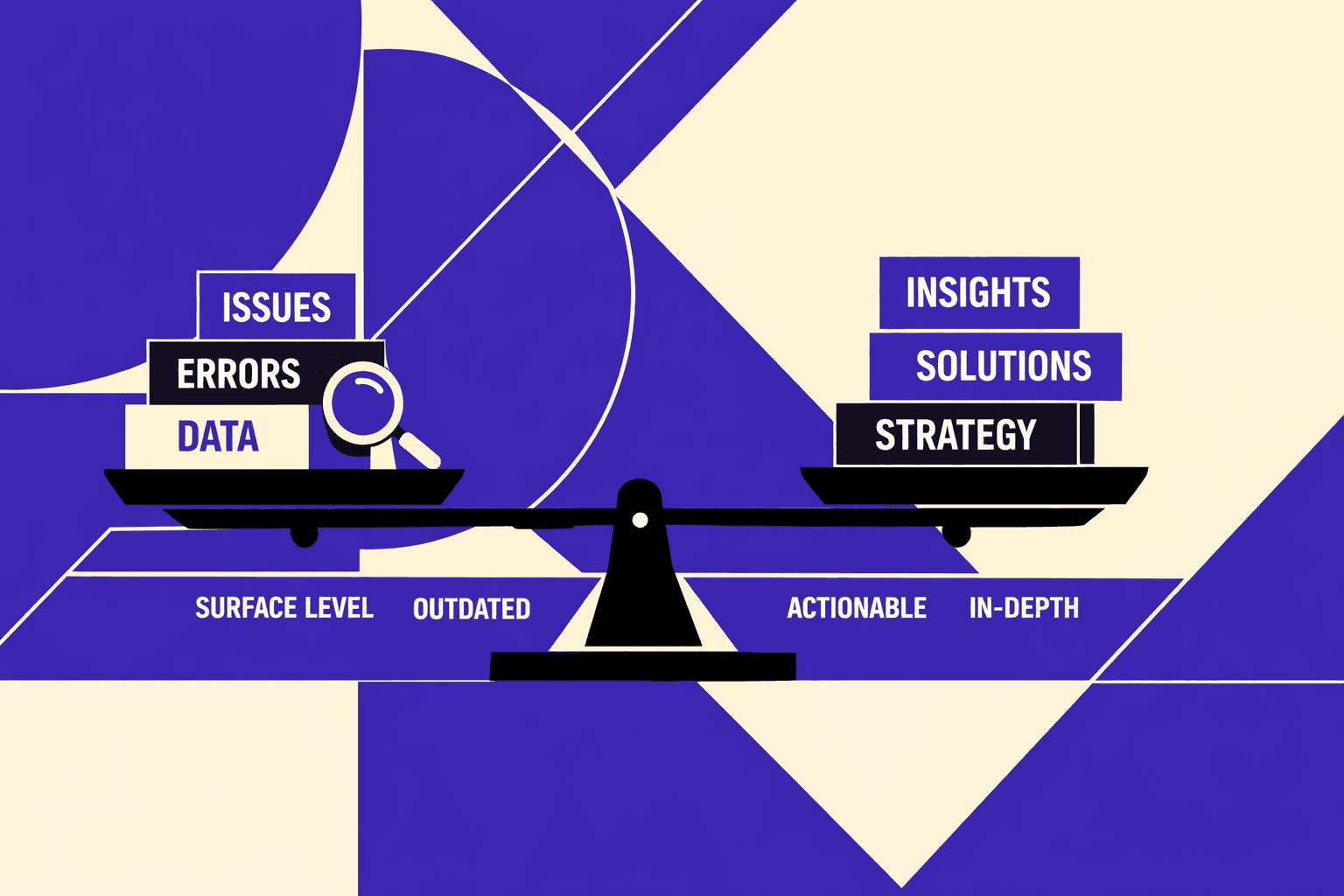

Vanity scores versus failure modes

I like free tools, but I hate how teams use them.

A free SEO audit tool or free SEO checker score rewards surface cleanup.

It pushes title tags and missing H1s ahead of real failure modes.

Meanwhile, systemic issues keep compounding.

Indexation drift slowly grows as templates change.

JS rendering gaps hide links and content from crawlers.

Internal linking decay breaks discovery, one “small” nav tweak at a time.

One client reduced their SEO tool stack from $4,200/month to $600/month by replacing Ahrefs and Screaming Frog licenses with Screaming Frog Free + Google Search Console for their audit pipeline.

That cost pressure drives leaders toward shallow scoring systems.

But rankings don't move, because the root cause stays untouched.

What breaks in real release cycles

Audit snapshots go stale the moment a deploy ships.

Without regression tests, teams reintroduce canonical, robots, and pagination bugs.

According to recent industry discussions, cost deltas change buying decisions fast.

But tool choice won't save you from release churn.

This is why audits often don’t lead to ranking gains.

They describe problems, but they don’t control the system.

We design audits like reliability engineering.

Define SLIs and SLOs for crawlability and indexation, then alert on regressions.
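A minimal sketch of an indexability SLI and SLO check, assuming you already have per-URL rows with a template label and an indexable flag (for example, a crawl export joined with Search Console data). The `UrlStatus` fields, the 95% target, and the helper names are hypothetical choices for illustration, not a standard.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class UrlStatus:
    url: str
    template: str    # hypothetical template label, e.g. "pdp" or "blog"
    indexable: bool  # however your crawl defines it (200, no noindex, self-canonical)


def indexability_sli(rows: list[UrlStatus]) -> dict[str, float]:
    """SLI: share of URLs per template that are indexable."""
    totals: dict[str, int] = defaultdict(int)
    good: dict[str, int] = defaultdict(int)
    for row in rows:
        totals[row.template] += 1
        if row.indexable:
            good[row.template] += 1
    return {template: good[template] / totals[template] for template in totals}


def check_slo(sli: dict[str, float], target: float = 0.95) -> list[str]:
    """Return one alert message per template whose SLI is below the target."""
    return [
        f"{template}: indexability {rate:.1%} is below SLO {target:.0%}"
        for template, rate in sorted(sli.items())
        if rate < target
    ]


if __name__ == "__main__":
    rows = [
        UrlStatus("/p/1", "pdp", True),
        UrlStatus("/p/2", "pdp", True),
        UrlStatus("/p/3", "pdp", False),
        UrlStatus("/blog/a", "blog", True),
    ]
    for alert in check_slo(indexability_sli(rows)):
        print(alert)
```

In a pipeline, the alert list would feed whatever paging or ticketing system the team already uses. The point is that a template dropping below target becomes a regression to fix, not a line in a report.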

For a practical walkthrough of how crawling and indexing work, Google Search Central's documentation explains the mechanics clearly.

If you want the scalable version, we go deeper in Automated Technical SEO Is the Only Scalable Solution for SaaS Growth.

The State of Technical SEO Audits in 2026

Indexation is the new visibility bottleneck

We’re seeing bigger SEO losses from indexation control than meta tag hygiene. Canonicals drift after refactors. Noindex leaks into production. Parameters explode into crawlable variants. Soft 404s pile up and look “fine” in QA.

I remember one Tuesday deploy that “only changed routing.” By Thursday, our top templates flatlined in Search Console. The culprit was boring: a canonical template defaulted to the wrong base URL. Nobody noticed because the pages still rendered perfectly.

This is why I treat indexation like a control plane. If you can’t prove what should index, you don’t own visibility.

Rendering and hydration failures are still underdiagnosed

JavaScript frameworks, edge rendering, and personalization widened the gap. Users see a complete page. Bots often see partial content, unstable links, or blocked API calls. At scale, that mismatch becomes a ranking tax.

Hydration failures are the quiet killer. The HTML ships, but internal links appear only after client-side state loads. Crawlers don’t wait for your app to “feel ready.” A basic free SEO checker score won’t catch that.
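One way to catch this is a render parity check on links: compare the links in the server-delivered HTML with the links in the rendered DOM. The sketch below assumes you already captured both as strings (for example, the raw HTTP response and a headless-browser snapshot). `extract_links` and `links_missing_from_raw_html` are hypothetical helper names, and the code uses only Python's standard `html.parser`.

```python
from html.parser import HTMLParser


class LinkCollector(HTMLParser):
    """Collects href values from <a> tags."""

    def __init__(self) -> None:
        super().__init__()
        self.links: set[str] = set()

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.add(value)


def extract_links(html: str) -> set[str]:
    collector = LinkCollector()
    collector.feed(html)
    collector.close()
    return collector.links


def links_missing_from_raw_html(raw_html: str, rendered_html: str) -> set[str]:
    """Links present after rendering/hydration but absent from the server HTML."""
    return extract_links(rendered_html) - extract_links(raw_html)


if __name__ == "__main__":
    raw = '<nav><a href="/home">Home</a></nav><div id="app"></div>'
    rendered = ('<nav><a href="/home">Home</a></nav>'
                '<div id="app"><a href="/pricing">Pricing</a></div>')
    print(links_missing_from_raw_html(raw, rendered))  # {'/pricing'}
```

Any link in the result exists only after client-side rendering, which is exactly the gap a crawler that doesn't wait for hydration would miss.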

Core Web Vitals matured but measurement still misleads

CWV isn’t a debate anymore. The problem is how teams measure it. Many dashboards blend page types, average away outliers, and hide the template that actually hurts field data.

We focus on lab-to-field correlation and segment by page type. Category, PDP, blog, docs, and search pages behave differently. When we map fixes to templates, we stop shipping placebo “optimizations.”
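A sketch of segmenting field data by page type, assuming RUM samples arrive as `(page_type, lcp_ms)` pairs (a hypothetical shape). It computes the 75th percentile per page type, since Core Web Vitals are assessed at p75, and compares it with the 2.5-second "good" LCP threshold. The nearest-rank percentile used here is one simple choice among several.

```python
import math
from collections import defaultdict


def p75(values: list[float]) -> float:
    """75th percentile using the nearest-rank method."""
    ordered = sorted(values)
    rank = math.ceil(0.75 * len(ordered))  # 1-based rank
    return ordered[rank - 1]


def lcp_p75_by_page_type(samples: list[tuple[str, float]]) -> dict[str, float]:
    """Group (page_type, lcp_ms) field samples and compute p75 per page type."""
    grouped: dict[str, list[float]] = defaultdict(list)
    for page_type, lcp_ms in samples:
        grouped[page_type].append(lcp_ms)
    return {page_type: p75(values) for page_type, values in grouped.items()}


LCP_GOOD_MS = 2500  # "good" LCP threshold, evaluated at p75

if __name__ == "__main__":
    samples = [("pdp", 1800), ("pdp", 2100), ("pdp", 3900), ("pdp", 4200),
               ("blog", 1200), ("blog", 1400)]
    for page_type, value in sorted(lcp_p75_by_page_type(samples).items()):
        status = "ok" if value <= LCP_GOOD_MS else "needs work"
        print(page_type, value, status)
```

Keeping page types separate means a slow template shows up under its own name instead of being averaged into a site-wide number.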

If you need deeper tactics, our approach aligns with what we laid out in Automated Technical SEO Is the Only Scalable Solution for SaaS Growth.

AI-scaled publishing makes technical debt compound

Higher publishing velocity makes technical issues compound faster. Internal link dilution becomes normal. Duplicate clusters form overnight. Crawl waste turns into a recurring reliability problem, not a one-off cleanup.
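A minimal sketch for spotting exact-duplicate clusters, assuming you have already extracted the main body text for each URL. It hashes normalized text, so it only catches content that is identical after whitespace and case changes; near-duplicate detection (shingling, SimHash) would be the next step. `find_duplicate_clusters` is a hypothetical name.

```python
import hashlib
import re
from collections import defaultdict


def normalize(text: str) -> str:
    """Collapse whitespace and lowercase so trivial differences don't split clusters."""
    return re.sub(r"\s+", " ", text).strip().lower()


def find_duplicate_clusters(pages: dict[str, str]) -> list[list[str]]:
    """Group URLs whose normalized body text is identical."""
    buckets: dict[str, list[str]] = defaultdict(list)
    for url, text in pages.items():
        digest = hashlib.sha256(normalize(text).encode("utf-8")).hexdigest()
        buckets[digest].append(url)
    # Only buckets holding more than one URL are duplicate clusters.
    return [sorted(urls) for urls in buckets.values() if len(urls) > 1]


if __name__ == "__main__":
    pages = {
        "/shoes?color=red": "Running Shoes  for Everyone",
        "/shoes": "running shoes for everyone",
        "/socks": "Wool socks",
    }
    print(find_duplicate_clusters(pages))  # [['/shoes', '/shoes?color=red']]
```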

This is also where the tool cost trap shows up. Teams pay "50X" for visibility, then miss the basics that block indexing anyway (https://www.instagram.com/reel/DTasrGmDMfk/). Meanwhile, I've seen teams overspend when free alternatives cover most needs. Screaming Frog's free version crawls up to 500 URLs. Google Search Console is free and reports indexation directly from Google. Combine those with Python scripts for log analysis, and you've built a $0 technical audit stack that rivals $400/month enterprise tools for many use cases. I'd rather run a tighter system with a free SEO audit tool plus regression tests.
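Here is roughly what such a log analysis script might look like, assuming access logs in the common Apache/Nginx combined format. It counts Googlebot requests by site section and status code. Matching on the user-agent string is a simplification: user agents can be spoofed, and Google recommends verifying its crawlers with a reverse DNS lookup (or its published IP ranges), which this sketch skips. The regex, `section`, and `googlebot_hits` are illustrative, not any specific tool's API.

```python
import re
from collections import Counter

# Combined log format: ip ident user [time] "METHOD path PROTO" status bytes "referer" "user-agent"
LOG_PATTERN = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<ua>[^"]*)"'
)


def section(path: str) -> str:
    """First path segment, e.g. '/products/1?x=y' -> '/products'."""
    parts = path.split("?", 1)[0].split("/")
    return "/" + parts[1] if len(parts) > 1 and parts[1] else "/"


def googlebot_hits(lines) -> Counter:
    """Count Googlebot requests by (section, status); unparseable lines are skipped."""
    counts: Counter = Counter()
    for line in lines:
        match = LOG_PATTERN.match(line)
        if not match or "Googlebot" not in match["ua"]:
            continue
        counts[(section(match["path"]), match["status"])] += 1
    return counts


if __name__ == "__main__":
    bot_ua = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
    sample = [
        f'66.249.66.1 - - [10/Jan/2026:10:00:00 +0000] "GET /products/1 HTTP/1.1" 200 512 "-" "{bot_ua}"',
        f'66.249.66.1 - - [10/Jan/2026:10:00:05 +0000] "GET /products/2 HTTP/1.1" 404 0 "-" "{bot_ua}"',
        '203.0.113.9 - - [10/Jan/2026:10:00:09 +0000] "GET /blog/a HTTP/1.1" 200 900 "-" "Mozilla/5.0"',
    ]
    for (sec, status), n in sorted(googlebot_hits(sample).items()):
        print(sec, status, n)
```

A rising share of 4xx or 5xx responses in one section is often the first sign that a release broke a crawl path.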

So how often should we run a technical SEO audit on a fast-changing website? Weekly for full-site checks, and after every meaningful release for targeted regression checks. If you ship daily, you need daily monitors. That’s the only way a free SEO audit stops being a false economy.

The Technical SEO Audit System We Built

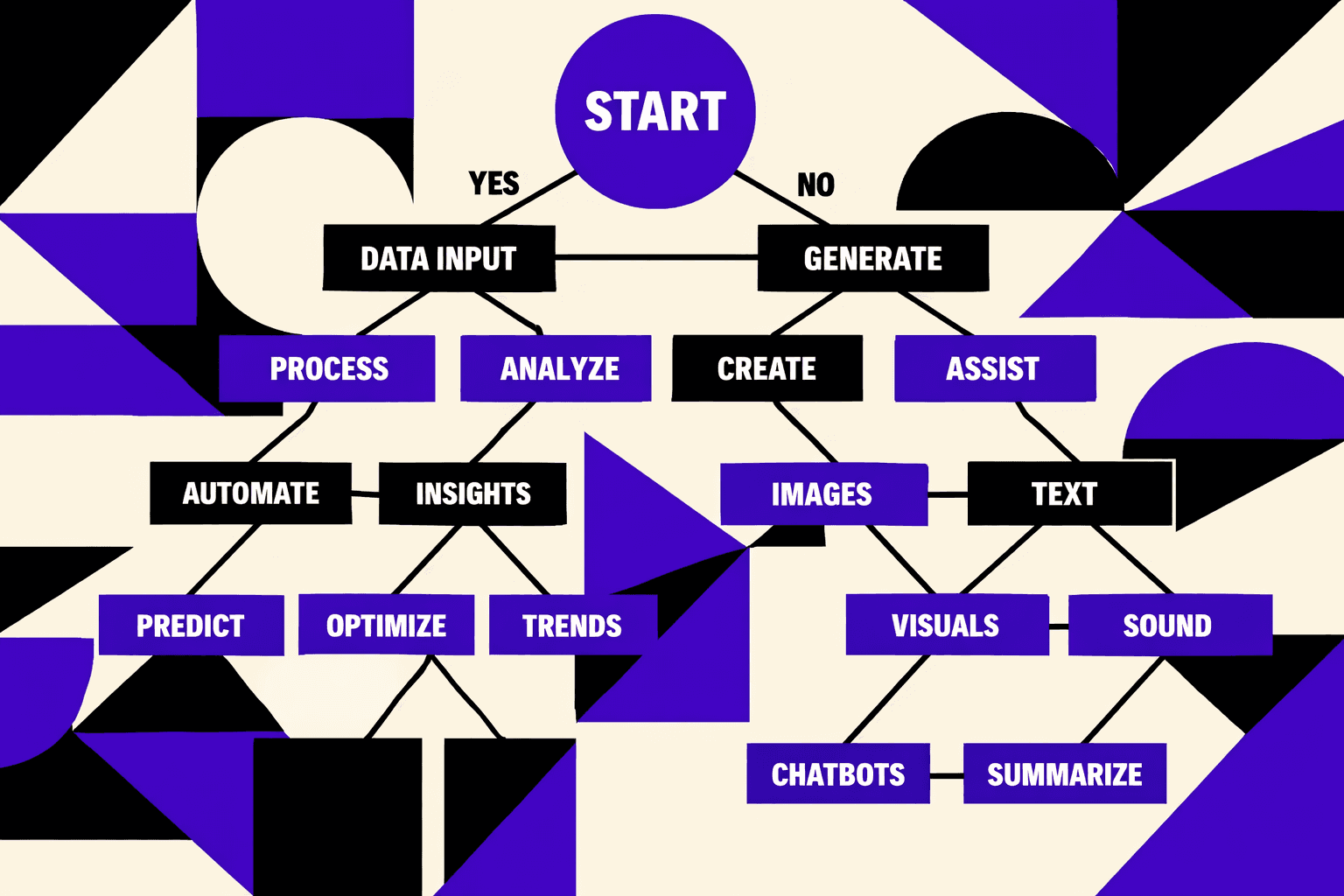

1. Architecture we use from crawl to backlog

We built our technical SEO audit as a pipeline, not a project.

It starts with scheduled crawls, log analysis, GSC exports, and performance sampling.

Then we normalize everything into one issue registry.

That registry ties every finding to templates and URL patterns.
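A sketch of such a registry, assuming findings arrive as `(source, issue, path)` tuples from crawls, logs, and GSC exports. URLs map to templates through an ordered list of regex patterns, and findings collapse into one entry per issue and template, tracking which sources reported it. The patterns, issue names, and `RegistryEntry` type are hypothetical.

```python
import re
from dataclasses import dataclass, field

# Hypothetical URL-pattern-to-template map; the first matching pattern wins.
TEMPLATE_PATTERNS = [
    ("pdp", re.compile(r"^/products/[^/]+$")),
    ("category", re.compile(r"^/c/")),
    ("blog", re.compile(r"^/blog/")),
]


def template_for(path: str) -> str:
    for name, pattern in TEMPLATE_PATTERNS:
        if pattern.match(path):
            return name
    return "other"


@dataclass
class RegistryEntry:
    issue: str
    template: str
    urls: set[str] = field(default_factory=set)
    sources: set[str] = field(default_factory=set)


def build_registry(findings) -> dict[tuple[str, str], RegistryEntry]:
    """Collapse (source, issue, path) findings into one entry per (issue, template)."""
    registry: dict[tuple[str, str], RegistryEntry] = {}
    for source, issue, path in findings:
        template = template_for(path)
        entry = registry.setdefault((issue, template), RegistryEntry(issue, template))
        entry.urls.add(path)
        entry.sources.add(source)
    return registry


if __name__ == "__main__":
    findings = [
        ("crawl", "missing_canonical", "/products/a"),
        ("gsc", "missing_canonical", "/products/b"),
        ("crawl", "redirect_chain", "/blog/old-post"),
    ]
    for entry in build_registry(findings).values():
        print(entry.issue, entry.template, len(entry.urls), sorted(entry.sources))
```

Counting distinct sources per entry also gives a rough evidence-strength signal: an issue seen in the crawl, the logs, and GSC is harder to dismiss than one seen in a single tool.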

I remember the day this clicked.

We had three dashboards open and four “truths” about the same URLs.

Engineering asked, “Which one is real?” and we had no clean answer.

So we made one system that can answer that question fast.

For an enterprise site, this is what a technical SEO audit includes.

Not a checklist, but a repeatable data flow.

Crawl data tells us what’s discoverable.

Logs tell us what bots actually hit.
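Comparing those two datasets is where they earn their keep. A sketch, assuming both are already reduced to sets of normalized paths over the same time window; the bucket names are hypothetical.

```python
def compare_crawl_and_logs(crawled: set[str], bot_hits: set[str]) -> dict[str, set[str]]:
    """Split URLs by whether they appear in our crawl, the bot logs, or both."""
    return {
        # Linked on the site, but bots haven't requested them in the log window.
        "discoverable_not_crawled_by_bots": crawled - bot_hits,
        # Bots request them, but our crawl can't reach them through links
        # (possible orphans, or legacy URLs still in sitemaps or external links).
        "bot_hits_not_discoverable": bot_hits - crawled,
        "both": crawled & bot_hits,
    }


if __name__ == "__main__":
    crawl = {"/", "/products/a", "/products/b"}
    logs = {"/", "/products/a", "/old-landing-page"}
    for bucket, urls in compare_crawl_and_logs(crawl, logs).items():
        print(bucket, sorted(urls))
```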

2. Signals we track and the thresholds we enforce

We track a small set of signals that map to failures.

Each one has a threshold we can enforce.

We don’t chase tool scores.

We chase drift, regressions, and broken contracts.

Here are our core signals:

- Indexability rate by template

- Canonical consistency

- Internal link depth distribution

- Redirect chains

- Status code hygiene

- XML sitemap coverage

- Hreflang validation, when applicable

- Render parity checks

Indexability by template is the anchor metric.

If a template slips, we assume a release caused it.

Canonical consistency is our duplicate-content tripwire.

Render parity tells us when bots see a different page.
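A sketch of two of these contracts as code, a canonical consistency check and a redirect chain counter, assuming a single production host and a redirect map taken from your crawl. `EXPECTED_HOST`, the one-hop limit, and the function names are hypothetical, and the self-referencing rule only fits templates where pages should canonicalize to themselves. A host check like this is the kind of test that flags a canonical template defaulting to the wrong base URL.

```python
from typing import Optional
from urllib.parse import urlsplit

EXPECTED_HOST = "www.example.com"  # hypothetical production host
MAX_REDIRECT_HOPS = 1              # hypothetical threshold


def canonical_problems(page_url: str, canonical: Optional[str]) -> list[str]:
    """Flag missing canonicals, wrong hosts, and non-self-referencing canonicals."""
    if not canonical:
        return ["missing canonical"]
    problems = []
    host = urlsplit(canonical).netloc
    if host != EXPECTED_HOST:
        problems.append(f"canonical host is {host or 'relative'}")
    if canonical.rstrip("/") != page_url.rstrip("/"):
        problems.append("canonical is not self-referencing")
    return problems


def redirect_hops(start: str, redirects: dict[str, str]) -> int:
    """Count hops until a URL stops redirecting; raise on redirect loops."""
    seen = {start}
    hops = 0
    current = start
    while current in redirects:
        current = redirects[current]
        hops += 1
        if current in seen:
            raise ValueError(f"redirect loop at {current}")
        seen.add(current)
    return hops


if __name__ == "__main__":
    print(canonical_problems("https://www.example.com/p/1", "https://staging.example.com/p/1"))
    hops = redirect_hops("/old", {"/old": "/older", "/older": "/new"})
    print(hops, "hops", "FAIL" if hops > MAX_REDIRECT_HOPS else "ok")
```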

3. How we turn findings into engineering work

We ship release guardrails, not reminders.

Before deploy, we run tests for robots.txt changes.

We test canonical tag presence on critical templates.

We also validate sitemap generation and noindex/canonical conflicts.
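A sketch of three of these pre-deploy guardrails (robots.txt, canonical presence, and noindex/canonical conflicts) using only the Python standard library, assuming the build can hand the test the candidate robots.txt and the HTML for a few critical pages. `CRITICAL_PATHS` and the helper names are hypothetical. Note that `urllib.robotparser` applies rules in file order and does not support `*` or `$` path wildcards, unlike Google's longest-match parsing, so treat the robots check as an approximation for simple files.

```python
from html.parser import HTMLParser
from typing import Optional
from urllib.robotparser import RobotFileParser

CRITICAL_PATHS = ["/", "/products/example", "/blog/example"]  # hypothetical


def robots_blocked_critical_paths(robots_txt: str, user_agent: str = "Googlebot") -> list[str]:
    """Return the critical paths the candidate robots.txt would block."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [path for path in CRITICAL_PATHS if not parser.can_fetch(user_agent, path)]


class HeadSignals(HTMLParser):
    """Collects the canonical href and meta robots content from a page."""

    def __init__(self) -> None:
        super().__init__()
        self.canonical: Optional[str] = None
        self.robots: str = ""

    def handle_starttag(self, tag, attrs):
        attributes = {name: (value or "") for name, value in attrs}
        if tag == "link" and attributes.get("rel", "").lower() == "canonical":
            self.canonical = attributes.get("href")
        elif tag == "meta" and attributes.get("name", "").lower() == "robots":
            self.robots = attributes.get("content", "").lower()


def page_guardrail_failures(page_url: str, html: str) -> list[str]:
    """Fail when a critical page lacks a canonical or pairs noindex with a cross-URL canonical."""
    signals = HeadSignals()
    signals.feed(html)
    signals.close()
    failures = []
    if not signals.canonical:
        failures.append("missing canonical")
    if "noindex" in signals.robots and signals.canonical and signals.canonical != page_url:
        failures.append("noindex combined with canonical to another URL")
    return failures


if __name__ == "__main__":
    print(robots_blocked_critical_paths("User-agent: *\nDisallow: /products/\n"))
    html = ('<head><meta name="robots" content="noindex">'
            '<link rel="canonical" href="https://www.example.com/other"></head>')
    print(page_guardrail_failures("https://www.example.com/page", html))
```

Wired into CI, a non-empty result from either function would fail the build before the change reaches production.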

Then we score every issue for engineering reality.

Impact equals traffic or revenue surface area.

Confidence equals evidence strength across sources.

Effort equals story points from the team.

We rank work by Impact × Confidence ÷ Effort, so high-impact, well-evidenced, cheap fixes rise to the top.
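A sketch of the scoring as code. It assumes effort is measured in story points, which means effort should divide the score: multiplying by story points would push the most expensive work to the top. The 1-10 impact scale, the 0-1 confidence scale, and the example issues are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Issue:
    name: str
    impact: float      # hypothetical 1-10 scale: traffic or revenue surface area
    confidence: float  # hypothetical 0-1 scale: evidence strength across sources
    effort: float      # story points from the team (must be >= 1)

    @property
    def priority(self) -> float:
        # Effort is a cost, so it divides the score instead of multiplying it.
        return self.impact * self.confidence / self.effort


def rank(issues: list[Issue]) -> list[Issue]:
    """Highest priority first."""
    return sorted(issues, key=lambda issue: issue.priority, reverse=True)


if __name__ == "__main__":
    backlog = [
        Issue("Canonical base URL on PDP template", impact=9, confidence=0.9, effort=2),
        Issue("Missing H1 on legacy blog posts", impact=2, confidence=0.8, effort=1),
        Issue("Rebuild faceted navigation", impact=8, confidence=0.6, effort=13),
    ]
    for issue in rank(backlog):
        print(f"{issue.priority:5.2f}  {issue.name}")
```

Effort has to be at least one point for the division to work; teams that size some tickets at zero points would need to clamp them.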

Yes, it’s simple on purpose.

If we can’t defend a priority in five minutes, it’s noise.

This is also why I like the mindset in Automated Technical SEO Is the Only Scalable Solution for SaaS Growth.

4. Where free tools fit and where they fail

We use a free SEO audit tool as a smoke test.

Same for any free SEO audit scan we can run fast.

Sometimes we even run a free SEO checker report.

It helps catch obvious breakage after a deploy.

But we don’t let third-party scores set priorities.

Those tools can’t see our logs, our templates, or our release history.

They also compress complex failures into one number.

That’s a terrible interface for engineering decisions.

If you ask, “Can we rely on a free SEO audit tool for technical SEO decisions?” my answer is no.

Use it to spot symptoms.

Use first-party data to prove causes.

Then write fixes as repeatable tests.

This matters more now because budgets are tightening.

So we keep free tools in the stack.

We just keep them in their lane.

The real system is our pipeline, our thresholds, and our guardrails.

That’s how we turn SEO into engineering work that ships.

Evidence: Client Impact and What We Predict Next

This is what I mean when I say our best wins never come from chasing a third-party score. They come from making indexation stable, cutting crawl waste, and stopping regressions before they ship. When we reduce excluded and duplicate clusters, Google stops "arguing" with our intent. When we fix crawl paths and template errors, discovery speeds up and coverage stays clean.

That shows up in the metrics we actually report. We push the indexed-to-submitted ratio up by template, not as a site-wide vanity number. We shorten the time between publishing and first discovery for new URLs. We drive down template-level noise in Google Search Console so teams can see real issues again. We also reduce 4xx and 5xx incidence on crawl paths, because broken edges quietly tax crawl and trust. And when we clean up the technical bottlenecks on a specific template, we see measurable lifts in non-branded organic sessions on that same template, not “somewhere on the site,” but where the work landed.
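Two of those metrics are straightforward to compute once the pipeline exists. A sketch, assuming publish timestamps from your CMS, first Googlebot hits from logs, and per-template submitted and indexed counts (for example, from sitemap-level reporting in Search Console). The function names and sample figures are illustrative, not client data.

```python
from datetime import datetime
from statistics import median
from typing import Optional


def hours_to_first_discovery(published: dict[str, datetime],
                             first_bot_hit: dict[str, datetime]) -> Optional[float]:
    """Median hours from publish to first Googlebot request, over URLs bots have hit."""
    deltas = [
        (first_bot_hit[url] - published_at).total_seconds() / 3600
        for url, published_at in published.items()
        if url in first_bot_hit
    ]
    return median(deltas) if deltas else None


def indexed_to_submitted(submitted: dict[str, int], indexed: dict[str, int]) -> dict[str, float]:
    """Per-template ratio of indexed URLs to URLs submitted in sitemaps."""
    return {template: indexed.get(template, 0) / count
            for template, count in submitted.items() if count}


if __name__ == "__main__":
    published = {"/blog/a": datetime(2026, 1, 5, 9), "/blog/b": datetime(2026, 1, 6, 9)}
    first_hit = {"/blog/a": datetime(2026, 1, 5, 15), "/blog/b": datetime(2026, 1, 7, 21)}
    print(hours_to_first_discovery(published, first_hit))
    print(indexed_to_submitted({"pdp": 1000, "blog": 200}, {"pdp": 870, "blog": 190}))
```

URLs with no bot hit yet are excluded from the median, so it is worth tracking their count separately; a growing backlog of undiscovered URLs is its own warning sign.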

Some will argue audits are commodity and free. Free scans can be useful smoke tests. We use them too. But they mostly find symptoms. They don’t create governance. They don’t prevent the same canonical bug from returning next sprint. They don’t produce an engineering-ready backlog tied to business risk, with owners, reproducible cases, and a defined blast radius.

Another pushback is that engineering won’t prioritize SEO. In our experience, that’s only true when we frame SEO as “marketing asks.” We frame it as reliability and revenue protection. We bring test cases an engineer can run in minutes. We scope impact by template and URL pattern. We attach clear risk: what breaks, how far it spreads, and what it costs in crawl, indexation, and demand capture.

This is why we believe technical SEO is shifting fast. In 2026, the winners won’t treat a technical SEO audit as a quarterly artifact. They’ll treat it like SEO reliability engineering. CI checks, monitoring, and SLOs for crawlability and indexation will become normal. Teams that adopt that model will out-iterate competitors without shipping silent SEO outages.

If you want a practical starting point, implement a 30-day audit-to-system sprint. Baseline your signals. Define thresholds that trigger action. Automate five high-risk tests. Then ship a prioritized backlog tied to template owners and business risk.

Ready to make your technical SEO audit behave like a system, not a report? Let’s talk through your stack and release flow.