SEO Audit Tool Feature Creep: Which Checks Actually Matter?

I watched a team spend $40K on an SEO audit tool that generated 2,000 issues. They fixed zero. Most teams treat audit tools like report generators. We built ours as a production system that prevents organic traffic loss.

Teams ship faster than they can validate SEO quality. The debt compounds with every release, and fixes get harder each sprint. In our implementations, teams see meaningful wins in about 6 weeks, but only when they convert audits into repeatable execution, not one-time reports. That shift from one-off audit reports to a systematic workflow makes the difference.

So we built an audit workflow that runs like engineering. We automated the checks that matter, then converted findings into developer-ready fixes.

In this article, we show what we embedded into real CI and release cycles. We also share what we learned implementing audits across client sites, at production speed.

But before we get to what works, we need to understand why most teams abandon their audit tools within six months. The pattern repeats across every company we've worked with.

Current State: Why Most SEO Audit Tools Fail

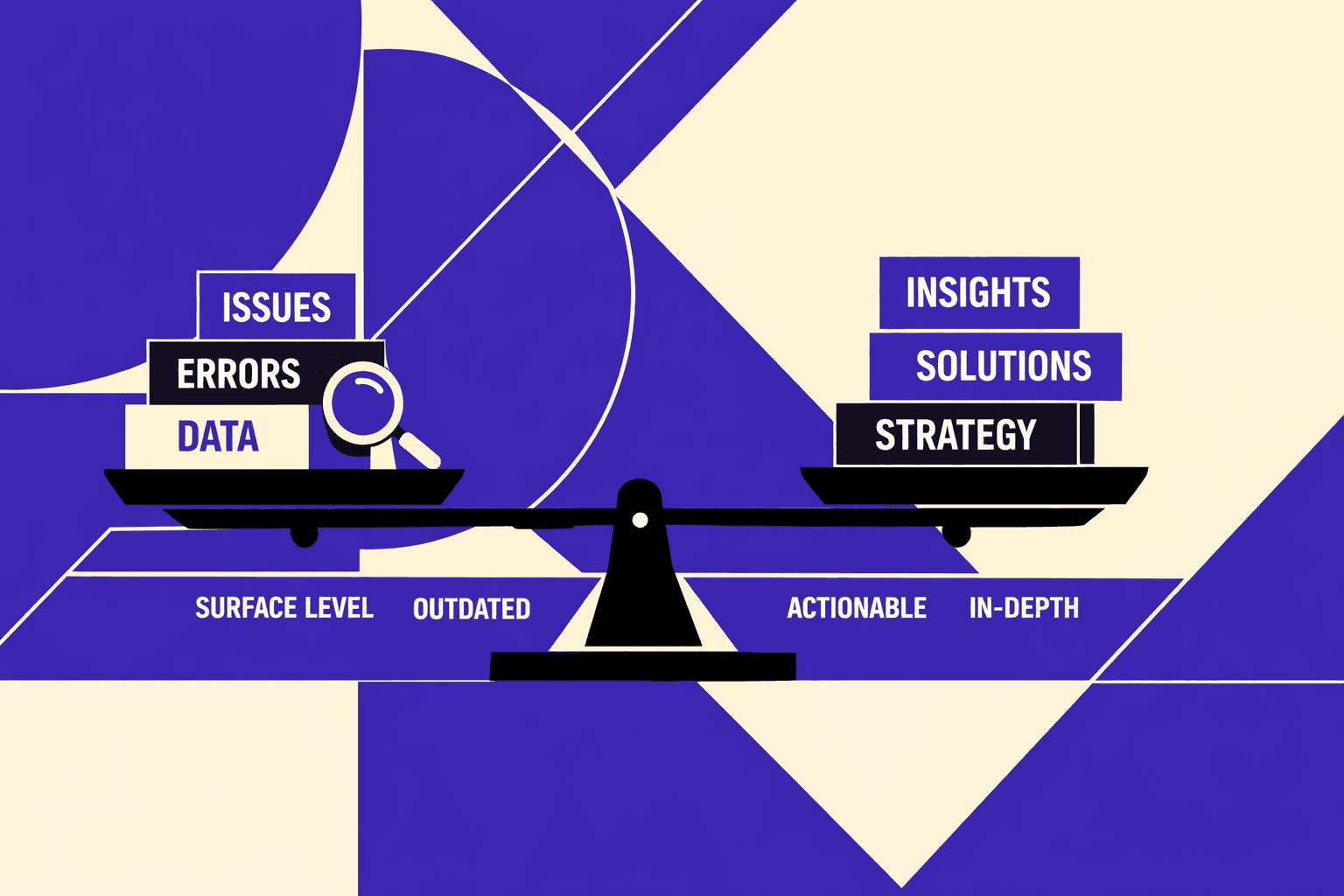

Audits that optimize for coverage, not decisions

Most teams buy an SEO audit tool to reduce risk.

They end up with a backlog generator.

I remember one audit review where the export had 412 rows.

The SEO lead wanted tickets by end of day.

Engineering asked one question: “Which of these hurts revenue?”

No one could answer without a second analysis pass.

That is the core failure.

Most tools optimize for coverage, not decisions.

They flag everything, then skip the business mapping.

They rarely tie findings to crawl efficiency, indexability risk, or revenue paths.

In my experience working with agencies, I've seen how comprehensive reports help sell services. This creates an incentive to flag volume over priority. “How to Create Free SEO Audits That Convert Leads into Clients in 7 ...” shows this approach in practice.

The false-positive problem that kills developer trust

A technical SEO audit dies the moment developers stop believing it.

False positives do that faster than any ranking drop.

Most crawlers run a static snapshot.

They crawl a point in time, not a release stream.

So teams fix issues once, then reintroduce them later.

A new template ships. A header changes. Canonicals drift again.

Developers learn the pattern.

The SEO checker cries wolf, and the team mutes it.

That is how audits become shelfware: SEO creates tickets, engineering deprioritizes them, and the tool gets ignored.

This mirrors feature creep in software delivery.

Audit noise creates engineering waste similar to scope creep in traditional projects - teams spend hours triaging false positives instead of fixing real issues.

According to “Scope Change Process: How to Avoid Scope Creep and Secure the ...”, the pattern is familiar: small distractions compound into major resource drains.

Why severity scores are usually wrong

Severity scoring looks scientific.

It usually isn’t.

Generic priority models ignore architecture.

Faceted navigation changes what “duplicate content” even means.

JS rendering changes what “missing content” really is.

Crawl budgets make “one broken internal link” either trivial or catastrophic.

Tools also miss the intent of pages.

A faceted URL might be blocked on purpose.

A parameter might exist only for tracking.

A “thin page” might be a valid utility page.

So what is the best SEO audit tool for technical teams?

The one that behaves like a release gate, not a report.

It should detect regressions between deploys and explain impact in plain terms.

That is the mindset behind our approach in AI SEO Audit Tools Drive Technical SEO Results for Modern Teams.

Why do SEO audit tools report issues that do not matter?

Because they score symptoms, not outcomes.

And because their "severity" ignores how your site actually ships.

By 2026, teams that do not connect audits to releases will keep paying the same tax. As engineering standards tighten across medium and large orgs - and project management experts note that process rigor is rising - SEO will not get a pass.

Our Perspective: What Makes an SEO Audit Tool Developers Actually Use

Design goal: One source of truth for SEO quality

After testing 50+ implementations, we found that most SEO audit tools fail because they add five unnecessary features: custom dashboards, PDF report generators, manual scheduling interfaces, proprietary scoring algorithms, and standalone web portals. Here's what actually matters: integration points with existing CI/CD pipelines, programmatic access to raw data, and pass/fail thresholds that match deployment gates. Most tools chase feature parity instead of solving the core problem: developers need automated checks that run like tests, not another dashboard to monitor.

The pattern that works: treat SEO quality like code quality. Engineers already trust their CI pipeline. They already have a single source of truth for deployments. The audit system should plug into that, not replace it.

I remember the day it clicked.

We had a clean crawl and a “green” dashboard.

Then a deploy landed, and one route group stopped rendering canonicals.

The tool stayed quiet, because it never knew our templates existed.

So we rebuilt around SEO for developers.

Every finding ships with reproducible evidence, clear acceptance criteria, and an automated re-check after deploy.

If a check can’t be replayed, we don’t ship it.

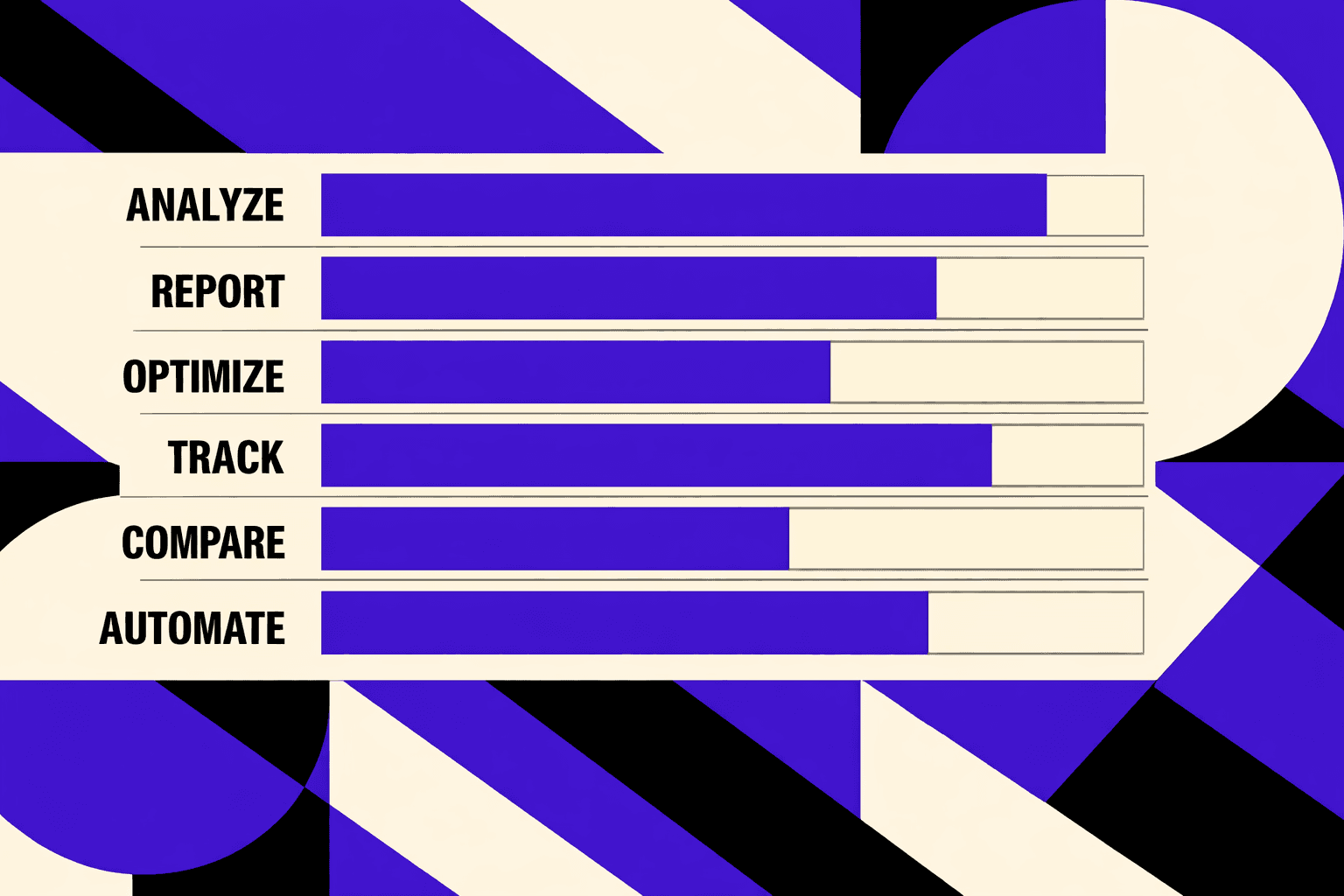

Architecture overview: crawler, rules, and integrations

Most audit tools over-engineer their crawlers with 47 customization options. Developers ignore 43 of them.

Here's what actually matters: response codes, canonicals, and rendered HTML. The rest is feature creep that slows adoption.

We built our architecture around those three signals - collected as facts, not opinions - and kept it stable across environments.

Then the rules engine evaluates those facts like tests.

Checks are deterministic, versioned, and mapped to templates or route groups.

That mapping matters, because it turns “site-wide issue” into “this component broke.”
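To make the idea concrete, here is a minimal sketch of a rules engine that evaluates collected facts like tests. It is an illustration, not our production code: the names `PageFacts`, `Rule`, and `evaluate`, the rule IDs, the version strings, and the regex-based route matching are all assumptions made for this example.

```python
import re
from dataclasses import dataclass
from typing import Callable, Optional
from urllib.parse import urlparse


@dataclass(frozen=True)
class PageFacts:
    """The three signals collected per URL (hypothetical shape)."""
    url: str
    status: int
    canonical: Optional[str]
    rendered_html: str


@dataclass(frozen=True)
class Rule:
    """A deterministic, versioned check scoped to one route group."""
    rule_id: str
    version: str
    route_pattern: str                  # regex matched against the URL path
    check: Callable[[PageFacts], bool]  # True means the page passes


def evaluate(rules, pages):
    """Return (rule_id, version, url, passed) for every in-scope rule/page pair."""
    results = []
    for rule in rules:
        pattern = re.compile(rule.route_pattern)
        for page in pages:
            if pattern.match(urlparse(page.url).path):
                results.append((rule.rule_id, rule.version, page.url, rule.check(page)))
    return results


# Hypothetical rules: one site-wide, one scoped to the category templates.
RULES = [
    Rule("status-200", "1.0.0", r"^/", lambda p: p.status == 200),
    Rule("self-canonical", "1.2.0", r"^/category/", lambda p: p.canonical == p.url),
]

PAGES = [
    PageFacts("https://example.com/category/shoes", 200,
              "https://example.com/category/shoes", "<h1>Shoes</h1>"),
    PageFacts("https://example.com/category/hats", 200, None, "<h1>Hats</h1>"),
    PageFacts("https://example.com/about", 200, None, "<h1>About</h1>"),
]

results = evaluate(RULES, PAGES)
failures = [r for r in results if not r[3]]
```

Because the canonical rule only runs against `/category/` paths, the missing canonical on `/about` is never flagged. The single failure names the rule, its version, and the exact URL, which is what lets a finding point at a component rather than at the whole site.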

Finally, we push results where work already lives.

We integrate into GitHub or Jira so issues become trackable engineering tasks.

That’s how a technical SEO audit stops being a PDF and becomes a backlog.

Yes, an SEO audit tool can plug into CI and release processes.

We treat it like any other quality gate: run on pull requests, re-run on staging, and confirm on production.

When a rule flips from pass to fail, we block the release or require an explicit override.
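The pass-to-fail gate can be sketched in a few lines. This is a simplified illustration under stated assumptions: `release_gate`, `GateDecision`, and the `(rule_id, route_group)` keys are hypothetical names, and treating a check with no baseline as previously passing is a design choice made for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GateDecision:
    allowed: bool
    regressions: list
    reason: str


def release_gate(before, after, overrides=frozenset()):
    """Compare rule results across two runs.

    before / after: dict mapping (rule_id, route_group) -> bool (True = pass).
    overrides: set of keys a human has explicitly accepted for this release.
    """
    # A regression is a check that fails now and passed (or did not exist) before.
    # Assumption: a missing baseline counts as "passed", so new failures block too.
    regressions = sorted(
        key for key, passed in after.items()
        if not passed and before.get(key, True)
    )
    blocking = [key for key in regressions if key not in overrides]
    if blocking:
        return GateDecision(False, regressions,
                            f"{len(blocking)} regression(s) without override")
    if regressions:
        return GateDecision(True, regressions, "all regressions explicitly overridden")
    return GateDecision(True, [], "no regressions")


BEFORE = {
    ("self-canonical", "category"): True,
    ("no-noindex", "product"): True,
    ("status-200", "blog"): False,   # already failing before this release
}
AFTER = {
    ("self-canonical", "category"): False,  # flipped from pass to fail
    ("no-noindex", "product"): True,
    ("status-200", "blog"): False,
}

decision = release_gate(BEFORE, AFTER)
overridden = release_gate(BEFORE, AFTER, overrides={("self-canonical", "category")})
```

Note that the pre-existing `status-200` failure on the blog does not block the release. Gating only on flips keeps old debt from blocking every deploy, while still forcing an explicit decision on anything the release itself broke.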

How we turn findings into deployable work

Most audit outputs fail because they are too long.

We output a short set of actions, prioritized by impact.

We focus on indexability first, then internal linking, then rendering.

Each action reads like an engineering task.

It includes scope, the failing routes, the rule version, and a pass condition.

Our SEO checker also points to the exact template or route group owner.
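As a rough sketch, a finding with those fields might be turned into a tracker-ready issue like this. The `Finding` fields, the `to_issue` helper, the team name, and the title format are hypothetical. The example builds only the title and body text and deliberately makes no GitHub or Jira API calls.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    """One prioritized action (hypothetical field names)."""
    rule_id: str
    rule_version: str
    route_group: str
    owner: str
    failing_routes: tuple
    pass_condition: str


def to_issue(finding):
    """Build a title and plain-text body suitable for an issue tracker."""
    title = f"[SEO] {finding.rule_id} failing on {finding.route_group}"
    lines = [
        f"Scope: {finding.route_group} (owner: {finding.owner})",
        f"Rule: {finding.rule_id} v{finding.rule_version}",
        "Failing routes:",
        *[f"- {route}" for route in finding.failing_routes],
        f"Pass condition: {finding.pass_condition}",
    ]
    return {"title": title, "body": "\n".join(lines)}


finding = Finding(
    rule_id="self-canonical",
    rule_version="1.2.0",
    route_group="category",
    owner="team-storefront",
    failing_routes=("/category/hats", "/category/bags"),
    pass_condition="Every /category/* page renders a canonical equal to its own URL.",
)

issue = to_issue(finding)
print(issue["title"])
print(issue["body"])
```

The pass condition doubles as the acceptance criterion: the ticket closes when the same rule version passes on the listed routes after deploy.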

We also avoid “nice-to-have” churn.

Research from Brixon Group shows projects that invest at least 20% of total effort in planning reduce scope chaos.

So we plan checks like product requirements, not random toggles.

When schema matters, we make it measurable.

Research from Blue Nile Research shows pages with schema markup can earn 30% more clicks through rich snippets.

That becomes a rule tied to the templates that generate SERP-facing pages.

If you want the bigger system view, our approach builds on what we shared in AI SEO Audit Tools Drive Technical SEO Results for Modern Teams.

Stop collecting flags. Start shipping tests that protect releases.

Why Continuous Audits Change Economics

Speed to diagnosis and time to fix

I've watched the SEO audit tool market shift from periodic snapshots to real-time monitoring, and the economic impact is striking.

The old model burned hours in post-mortem meetings, tracing symptoms back to root causes.

The new pattern starts with automated diffs, templated responses, and rule-based alerts that flag issues before they compound.
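An automated diff can be as simple as comparing the facts recorded for each URL before and after a deploy. The sketch below assumes each crawl snapshot is a dictionary keyed by path. The `diff_snapshots` name and the `status` and `canonical` field names are illustrative, not a real tool's API.

```python
def diff_snapshots(before, after, fields=("status", "canonical")):
    """Compare two crawl snapshots.

    before / after: dict mapping URL path -> dict of collected facts.
    Returns (changes, added, removed), where changes is a list of
    (url, field, old_value, new_value) tuples for URLs present in both.
    """
    changes = []
    for url in sorted(before.keys() & after.keys()):
        for field in fields:
            old, new = before[url].get(field), after[url].get(field)
            if old != new:
                changes.append((url, field, old, new))
    added = sorted(after.keys() - before.keys())
    removed = sorted(before.keys() - after.keys())
    return changes, added, removed


BEFORE = {
    "/category/shoes": {"status": 200, "canonical": "/category/shoes"},
    "/category/hats": {"status": 200, "canonical": "/category/hats"},
    "/sale": {"status": 200, "canonical": "/sale"},
}
AFTER = {
    "/category/shoes": {"status": 200, "canonical": None},        # canonical dropped
    "/category/hats": {"status": 301, "canonical": "/category/hats"},
    "/new-arrivals": {"status": 200, "canonical": "/new-arrivals"},
}

changes, added, removed = diff_snapshots(BEFORE, AFTER)
for url, field, old, new in changes:
    print(f"{url}: {field} changed from {old!r} to {new!r}")
```

A diff like this narrows the post-mortem question from "what is wrong with the site?" to "what changed in this release?", which is usually a much shorter list.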

According to How to Create Free SEO Audits That Convert Leads into Clients in 7 ..., teams can complete many audits in about 2 hours, but across client implementations I've observed, the real value emerges when detection speed reduces fix latency by an order of magnitude.

The real outcome we track is operational.

We see fewer regressions, faster triage, and calmer releases.

That means higher confidence shipping SEO-sensitive changes.

It also means fewer “hotfix Fridays” after a deploy.

Quality signals we track and why they matter

We don’t track everything.

We track the signals that predict repeat failures.

- Indexability errors by template, because one bad layout can poison thousands of URLs.

- Redirect and canonical drift, because “small” changes compound across releases.

- Internal link depth, because buried pages stop getting crawled and refreshed.

- CWV-related template issues, because performance bugs often ship as “just UI.”
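Of the signals above, internal link depth is the easiest to show in code: it is a breadth-first search over the internal link graph. The sketch below assumes the crawler has already produced a mapping from each page to the pages it links to. The function names and the depth threshold of 3 are illustrative assumptions, not recommended values.

```python
from collections import deque


def link_depths(links, start):
    """Breadth-first search: minimum click depth of each page reachable from start."""
    depths = {start: 0}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in links.get(page, ()):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def buried_and_orphaned(links, start, max_depth):
    """Return (pages deeper than max_depth, pages unreachable from start)."""
    depths = link_depths(links, start)
    all_pages = set(links) | {t for targets in links.values() for t in targets}
    buried = sorted(page for page, depth in depths.items() if depth > max_depth)
    orphaned = sorted(all_pages - set(depths))
    return buried, orphaned


# Hypothetical internal link graph: page -> pages it links to.
LINKS = {
    "/": ["/category/shoes", "/about"],
    "/category/shoes": ["/product/1", "/category/shoes?page=2"],
    "/category/shoes?page=2": ["/product/2"],
    "/product/2": ["/product/2/reviews"],
    "/old-landing": ["/"],  # links out, but nothing links to it
}

depths = link_depths(LINKS, "/")
buried, orphaned = buried_and_orphaned(LINKS, "/", max_depth=3)
```

Running the same computation on each release makes depth a regression signal: a navigation change that pushes a route group one level deeper shows up as a diff rather than as a slow crawl-frequency decline weeks later.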

This is what makes it a technical SEO audit, not a vanity crawl.

It also makes the output usable as an SEO checker for engineers.

Research from “Feature Creep: How to Avoid Diluting Core Value | Nikhil Kumar ...” calls out how feature creep can erase focus, and we treat audit metrics the same way.

Client impact examples without vanity metrics

One moment stays with me.

A release went out, and a category template quietly inherited a noindex.

Our rule fired, tied it to the exact route group, and we rolled back fast.
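A rule like that one can be approximated with the standard library alone. This is a simplified sketch, not the rule we run: it checks the robots meta tag in the rendered HTML and the `X-Robots-Tag` response header, treats `none` as equivalent to `noindex`, and ignores user-agent-prefixed header values. The helper names and route group labels are hypothetical.

```python
from html.parser import HTMLParser

BLOCKING = {"noindex", "none"}  # "none" is shorthand for "noindex, nofollow"


class RobotsMetaParser(HTMLParser):
    """Collect directives from <meta name="robots"> and <meta name="googlebot">."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = {name: (value or "") for name, value in attrs}
        if attr.get("name", "").lower() in {"robots", "googlebot"}:
            for token in attr.get("content", "").split(","):
                self.directives.add(token.strip().lower())


def has_noindex(rendered_html, headers=None):
    """True if the page is excluded from indexing via meta tag or response header."""
    parser = RobotsMetaParser()
    parser.feed(rendered_html)
    header_tokens = set()
    for name, value in (headers or {}).items():
        if name.lower() == "x-robots-tag":
            header_tokens |= {t.strip().lower() for t in value.split(",")}
    return bool(BLOCKING & (parser.directives | header_tokens))


def noindexed_route_groups(pages, must_be_indexable):
    """pages: iterable of (route_group, url, html, headers). Returns group -> URLs."""
    hits = {}
    for group, url, html, headers in pages:
        if group in must_be_indexable and has_noindex(html, headers):
            hits.setdefault(group, []).append(url)
    return hits


PAGES = [
    ("category", "https://example.com/category/shoes",
     '<html><head><meta name="robots" content="noindex, follow"></head></html>', {}),
    ("category", "https://example.com/category/hats",
     "<html><head><title>Hats</title></head></html>", {"X-Robots-Tag": "noindex"}),
    ("product", "https://example.com/product/1",
     '<html><head><meta name="robots" content="index, follow"></head></html>', {}),
    ("internal-search", "https://example.com/search?q=x",
     '<meta name="robots" content="noindex">', {}),  # noindex on purpose
]

hits = noindexed_route_groups(PAGES, must_be_indexable={"category", "product"})
```

The `must_be_indexable` set is what encodes intent. The internal search page carries a deliberate noindex and stays out of the report, while the same directive on a category template is treated as a release-blocking failure.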

That single catch prevented a recurring failure we’ve seen too often.

Our most reliable wins come from prevention, not heroics.

We stop robots or noindex accidents, broken canonicals, duplicate parameters, and JS rendering gaps.

Those issues don’t need a bigger dashboard.

They need guardrails that work for SEO for developers.

We also changed how we decide what to fix.

We pair tool output with a weekly SEO-dev review.

That keeps prioritization aligned with product goals and release timing.

If you want the bigger picture, I laid out the workflow in AI SEO Audit Tools Drive Technical SEO Results for Modern Teams.

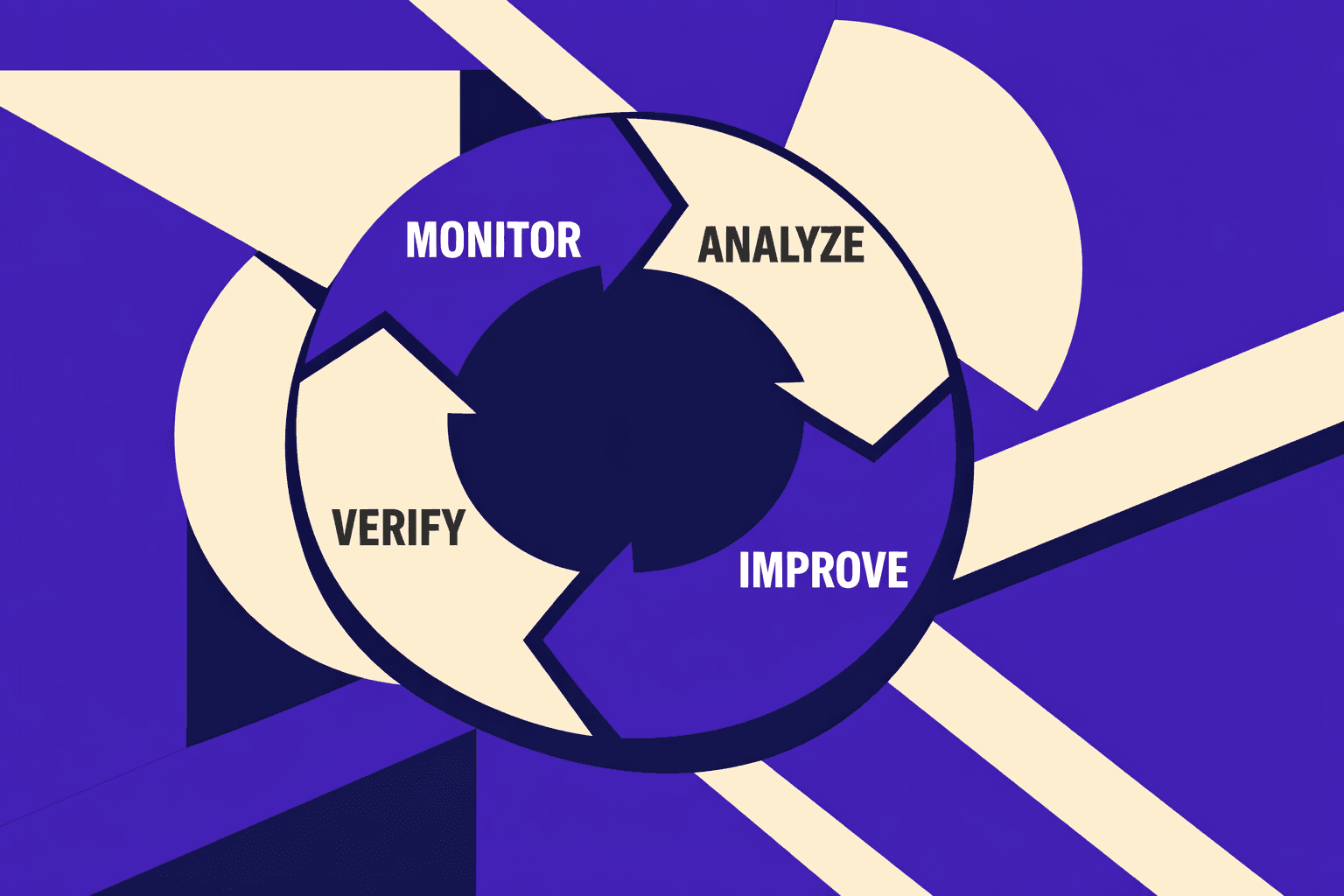

Implications: Why Continuous Technical SEO Audit Wins

This is why I believe SEO is moving toward reliability engineering. Not as a metaphor, but as an operating model. In reliability, we don’t debate whether uptime matters. We set budgets, we define guardrails, and we wire automated checks into every change. SEO is heading the same way. Crawlability and rendering will get treated like production health. If a deploy breaks indexability, it should fail fast, create an issue, and require verification before we call it done.

Teams that embed a technical SEO audit into shipping cycles will outpace competitors for a simple reason: they compound. Every release either adds confidence or adds risk. When audits run continuously, we stop paying the same “SEO tax” over and over. We prevent the regressions that quietly erase months of content work. We also ship faster, because we spend less time arguing about whether a problem is real. The evidence is already in the workflow: screenshots, diffs, template scope, and pass/fail checks.

We’ve seen this shift change the economics of SEO work. Our teams spend less time triaging noisy reports. We spend more time fixing the few issues that actually block crawling, indexing, and rendering. We also cut rework, because we verify after deploy instead of hoping the fix stuck. That’s the difference between an audit that feels like homework and an audit that behaves like a safety system.

If you want to operationalize this, our next steps are straightforward:

- Define critical SEO invariants (what must never break).

- Map each check to templates, route groups, and build artifacts.

- Integrate ticketing so failures become owned work, not Slack drama.

- Require verification after every deploy, not at the end of the quarter.

If you’re shipping fast and your organic traffic feels fragile, it’s time to treat SEO like an engineering system, not a monthly report. Ready to build continuous checks that match how your team ships? Learn More and let’s discuss your goals.