Why Your Technical SEO Audit Needs to Start With AI Readiness

Most companies are using an SEO audit tool backwards. They celebrate exports, then ship the same roadmap. An SEO audit tool only matters if it changes what we deploy next week.

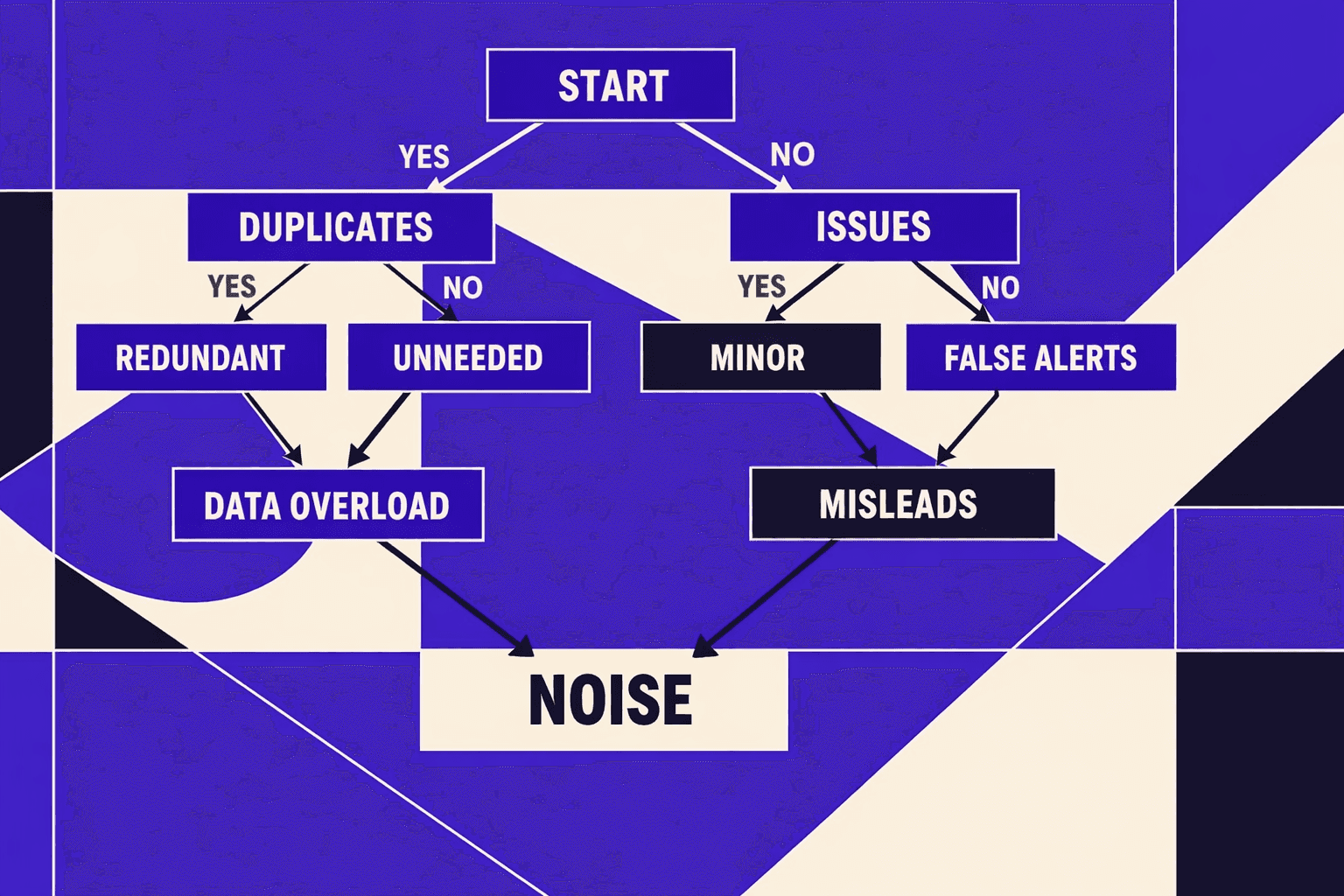

Meanwhile, teams drown in automated issues. Rankings slip, crawl budget gets wasted, and conversion paths break in quiet ways. According to How AI Actually Makes Technical SEO Better: Real Results from ..., AI can handle about 80% of technical SEO work. That volume creates noise, not clarity.

So we built an audit system at MygomSEO that ranks fixes by impact, not severity. A case in AI Technical SEO Audits: The New Frontier in Site Optimization showed a 12% lift after targeted fixes, and we wanted that result to be repeatable. In this playbook, we’ll show how we prioritize work, prove impact, and adapt to AI-driven search across modern stacks and client programs.

Why the SEO Audit Tool Market Optimizes For Reports

1. Current State: What audits look like inside most teams

Most teams treat an SEO audit tool like a PDF factory.

We export the findings, sort by “severity,” and call it a plan.

But nobody owns the fixes. Nobody sets deadlines.

So the backlog grows, and releases ship unchanged.

I remember one audit where we hit page 38.

A director asked, “Which five fixes move revenue this sprint?”

The report had no answer. It had labels, not leverage.

That’s the market default: exhaustive output over accountable execution.

A real technical SEO audit should behave differently.

It should assign an owner, a due date, and a test.

It should also track impact after deployment.

If it can’t do that, it’s not an audit. It’s a list.

2. Why checklists miss crawl reality, rendering, and internal link equity

The conventional wisdom says “follow the checklist.”

We believe that’s backwards for modern stacks.

Today’s failures live between systems, not inside one tool.

Templates drift in the CMS. CDN rules rewrite headers. Deploy cycles delay fixes.

JavaScript rendering is where checklists go to die.

A crawler sees one DOM. A user sees another.

The tool flags “missing content,” but the real cause is hydration timing.

Then the team wastes days debating severity scores.

Internal links break the same way.

A nav component changes, and link equity shifts overnight.

No report catches that business context.

That’s why we wrote Why Most SEO Audit Tools Miss Internal Linking Problems after seeing it repeat.

3. The new constraint: AI answers reward structure, not volume

AI-driven discovery raises the bar.

In SEO for AI search, structure beats bulk.

If a page can’t be parsed fast, it gets skipped.

If it isn’t linked well, it stays invisible.

This also exposes tool waste.

According to AI SEO Tools: How to Use AI for Faster, Smarter Optimization, teams use 10% of tool features.

So we pay for noise, then ship none of it.

The new win condition is AI SEO optimization with clear architecture.

Fast rendering. Stable templates. Clean internal paths.

How AI Actually Makes Technical SEO Better notes that 40% of consumers abandon pages that load slowly, which makes performance non-negotiable.

And AI Technical SEO Audits: The New Frontier in Site Optimization found that teams cut false positives from 15% to 3% when audits fit real context.

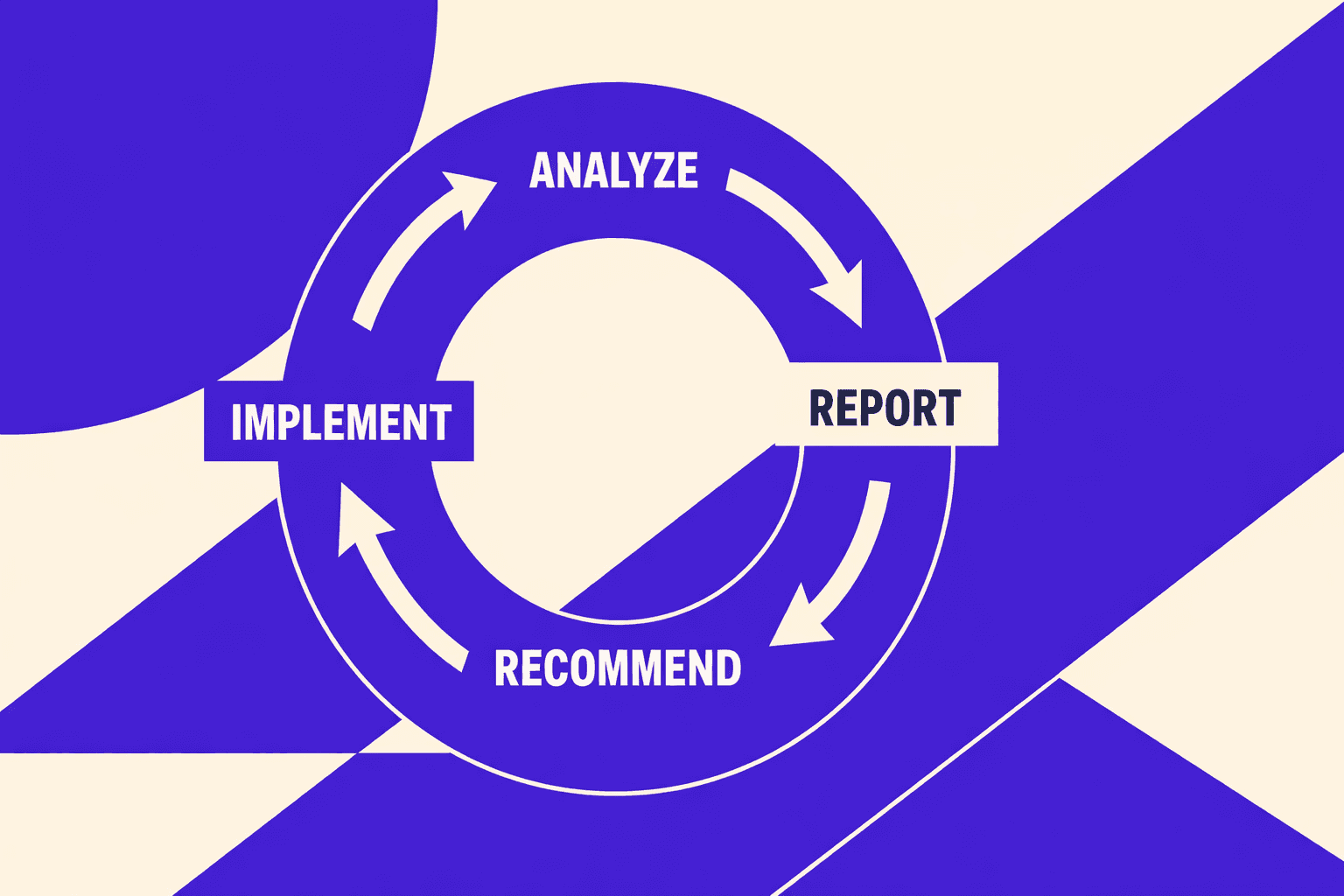

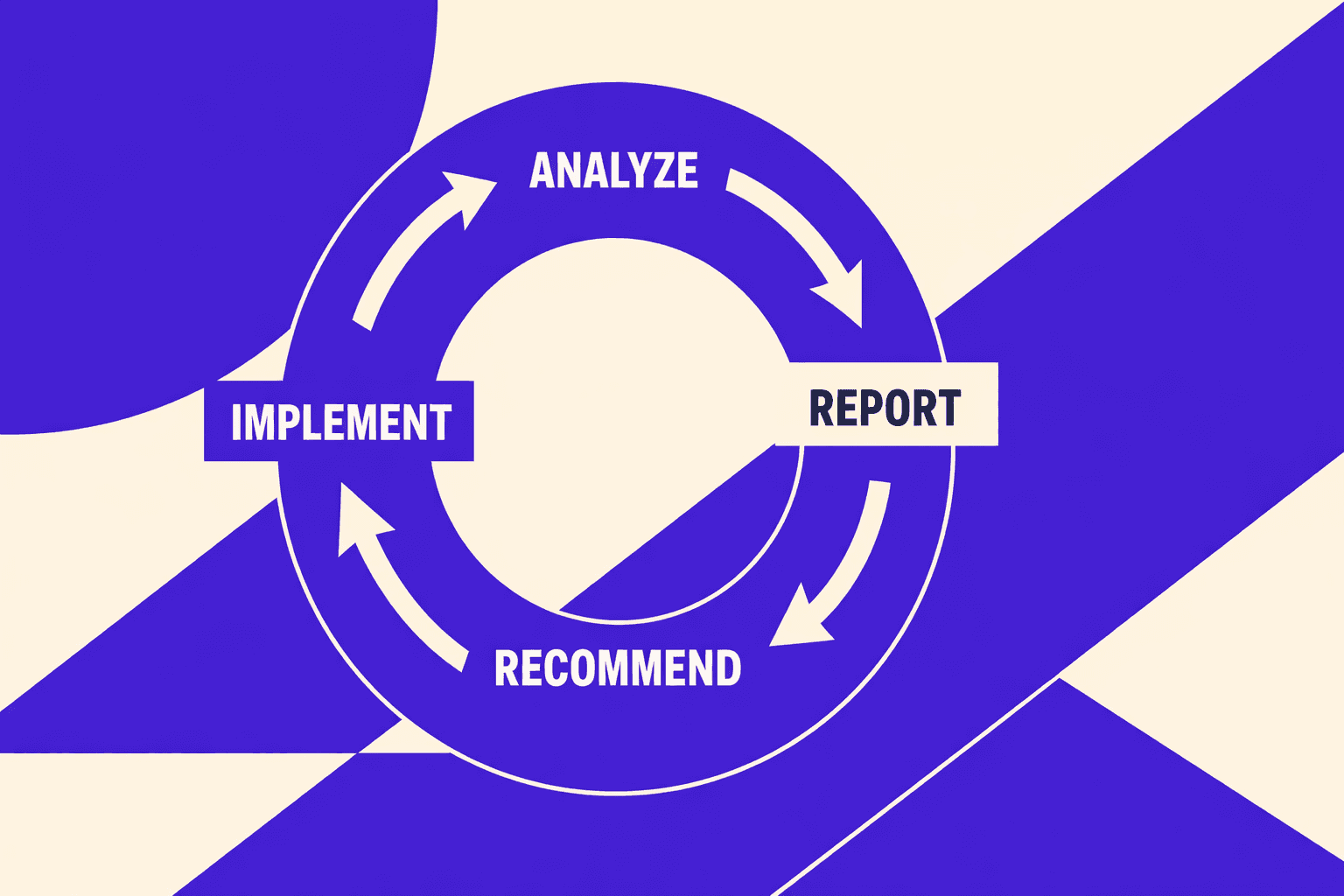

So how often should we run a technical SEO audit?

We run lightweight checks daily, tied to deploys.

We run a deeper review monthly, tied to roadmap decisions.

Anything slower can’t keep up with systems that change weekly.

Our Perspective: We Built an Audit Engine Not a Checklist

Design goal: One source of truth across crawl, logs, analytics, and rankings

We built this engine around one rule: every finding becomes a ticket.

That ticket includes expected impact and a verification method.

Anything else is noise, even when it looks “critical.”

I remember our first real run on a JavaScript-heavy catalog.

The crawler flagged missing titles across thousands of URLs.

Server logs showed Googlebot barely touched those templates.

Analytics showed the same pages never converted anyway.

So we merged the feeds.

That meant crawler output (source HTML plus rendered), server logs or edge telemetry, indexation signals, and template patterns.

The goal was one map we could trust.

That map drives our technical SEO audit decisions, not gut feel.
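To make the merge concrete, here is a minimal sketch, assuming each feed has already been reduced to a per-URL dictionary. The names `UrlRecord`, `merge_feeds`, and `worth_a_ticket` are hypothetical and only illustrate the idea; they are not the engine itself.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class UrlRecord:
    """One row of the merged map (hypothetical shape)."""
    url: str
    crawler_issues: List[str]
    googlebot_hits: int
    conversions: int


def merge_feeds(
    crawl_issues: Dict[str, List[str]],
    log_hits: Dict[str, int],
    conversions: Dict[str, int],
) -> Dict[str, UrlRecord]:
    """Join three per-URL feeds into one record per URL."""
    urls = set(crawl_issues) | set(log_hits) | set(conversions)
    return {
        url: UrlRecord(
            url=url,
            crawler_issues=list(crawl_issues.get(url, [])),
            googlebot_hits=log_hits.get(url, 0),
            conversions=conversions.get(url, 0),
        )
        for url in sorted(urls)
    }


def worth_a_ticket(record: UrlRecord, min_hits: int = 1) -> bool:
    """Assumed rule: a flagged issue only matters if the page is
    actually crawled or actually converts."""
    return bool(record.crawler_issues) and (
        record.googlebot_hits >= min_hits or record.conversions > 0
    )
```

With a rule like this, a "missing title" on a template that Googlebot never touches and that never converts drops out of the backlog instead of inflating it.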

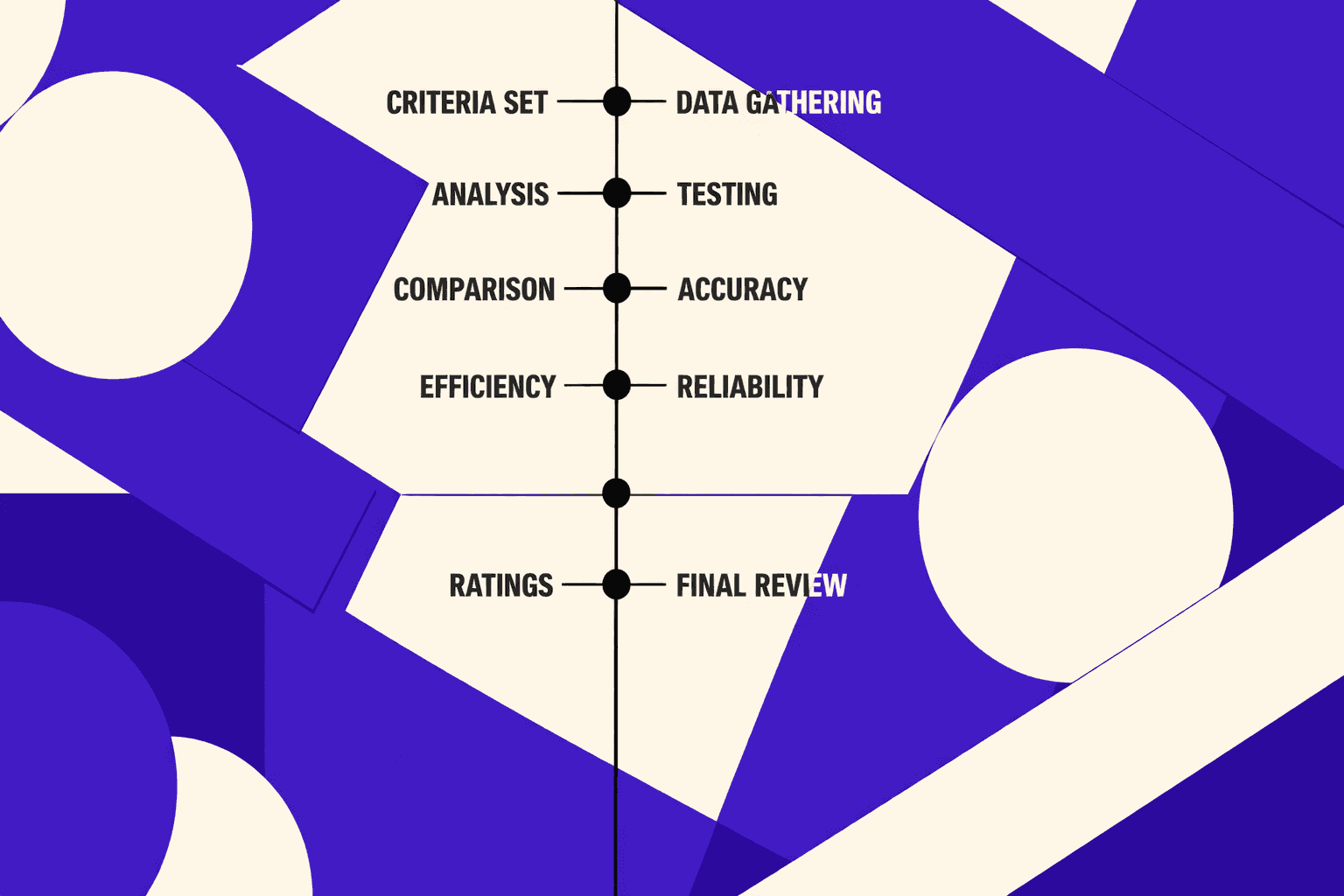

How we score issues by impact, confidence, and engineering effort

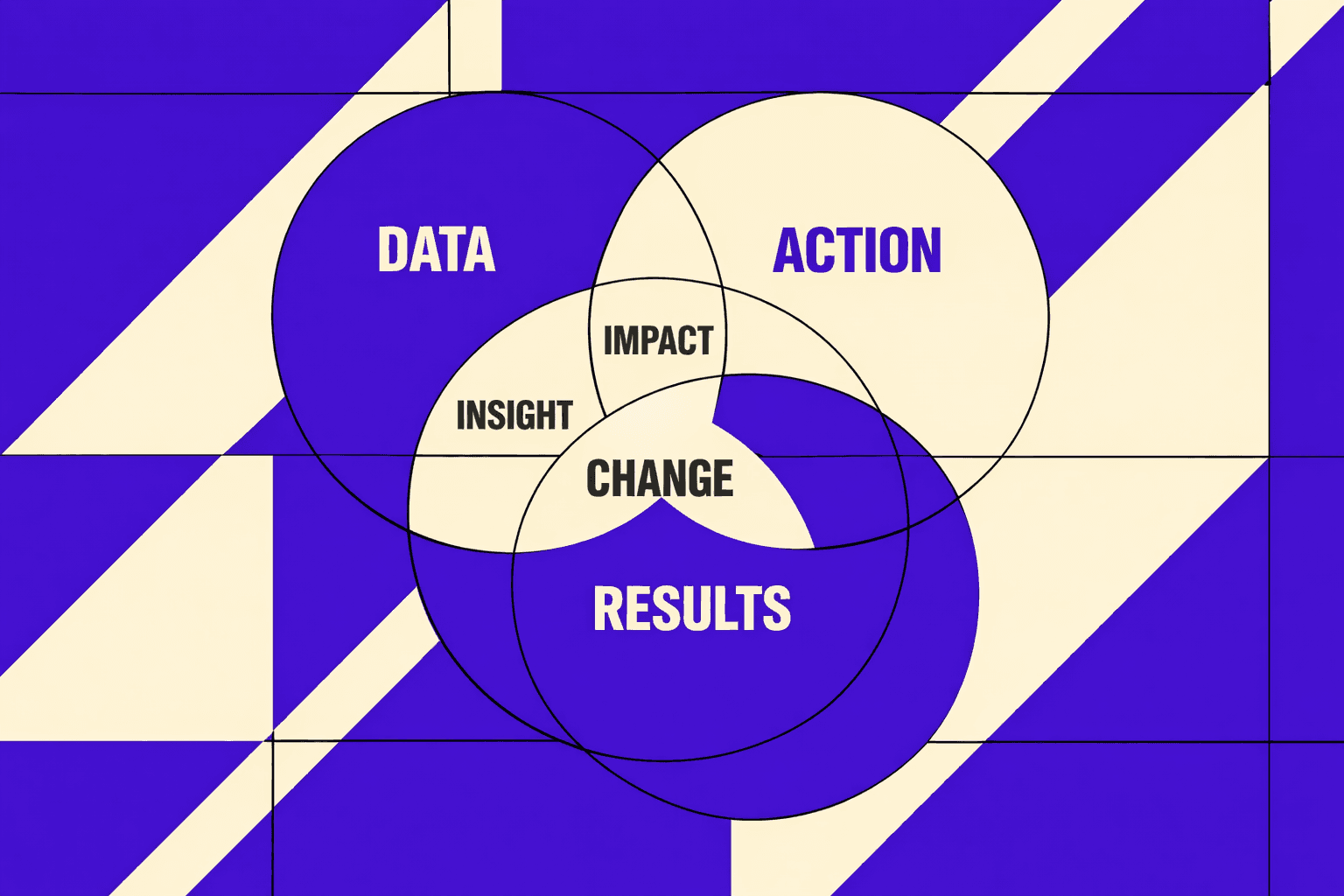

We score every issue using three inputs: impact, confidence, and engineering effort.

Impact comes first, and we define it in business terms.

Revenue pages first, then crawl efficiency, then long-tail scale.

Confidence is where most audits collapse.

If we cannot reproduce it across data sources, it drops fast.

One client cut false positives down to 3% over six months once we enforced cross-checks and verification rules, not “more rules.” According to AI Technical SEO Audits: The New Frontier in Site Optimization, that 3% level is achievable with an AI-assisted approach.

Engineering effort is not “easy vs hard.”

It is “what blocks a deploy.”

Template fixes beat URL-by-URL fixes every time.
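One way to turn the three inputs into a ranking is sketched below. The text names the inputs but not a formula, so the multiply-then-divide rule, the scales, and the names `Issue`, `priority`, and `rank` are all assumptions for illustration.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Issue:
    name: str
    impact: float      # assumed scale: 1 (long-tail) to 10 (revenue pages)
    confidence: float  # assumed scale: 0 to 1, share of sources that reproduce it
    effort: float      # assumed unit: engineer-days, must be positive


def priority(issue: Issue) -> float:
    """Assumed rule: impact weighted by confidence, divided by effort."""
    if issue.effort <= 0:
        raise ValueError("effort must be positive")
    return issue.impact * issue.confidence / issue.effort


def rank(issues: List[Issue]) -> List[Issue]:
    """Highest priority first."""
    return sorted(issues, key=priority, reverse=True)
```

Under these assumptions, a template fix with the same impact as a URL-by-URL fix ranks higher because its effort is lower, and an issue that only one source reproduces sinks even if a tool labels it critical.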

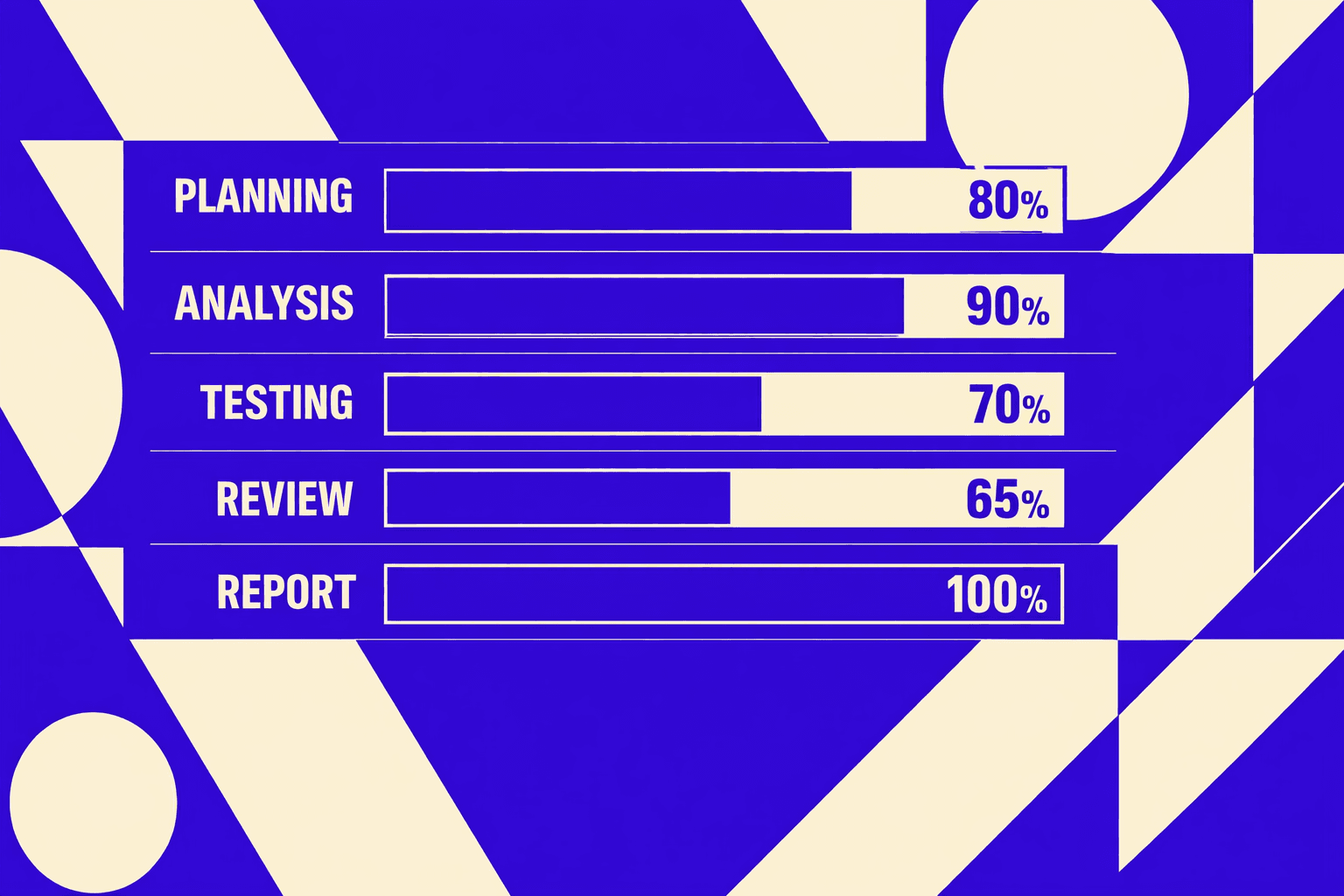

The workflow: Crawl, render, validate, deploy, verify

Our workflow stays boring on purpose.

Crawl, render, validate, deploy, verify.

If we cannot verify, we do not ship the ticket.

Crawl and render catch the gaps between HTML and what users see.

Validate means we test assumptions against logs and indexation signals.

Deploy means we attach ownership and acceptance criteria to code.

Verify means we re-crawl, check logs, and confirm the metric moved.
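The gate in that workflow can be sketched as a small state machine. This is an illustration under assumptions: the `Ticket` class, its fields, and the rule that the deploy stage requires both an owner and a verification method are hypothetical names for what the steps above describe.

```python
from dataclasses import dataclass
from typing import Optional

STAGES = ("crawl", "render", "validate", "deploy", "verify")


@dataclass
class Ticket:
    finding: str
    owner: Optional[str] = None
    verification: Optional[str] = None  # how we will prove the fix worked
    stage_index: int = -1               # -1 means no stage completed yet

    def can_ship(self) -> bool:
        """A ticket ships only with an owner and a way to verify it."""
        return bool(self.owner) and bool(self.verification)

    def advance(self) -> str:
        """Move to the next stage in order; stages cannot be skipped."""
        next_index = self.stage_index + 1
        if next_index >= len(STAGES):
            raise ValueError("ticket already verified")
        if STAGES[next_index] == "deploy" and not self.can_ship():
            raise ValueError("cannot deploy without owner and verification")
        self.stage_index = next_index
        return STAGES[next_index]
```

A ticket with no verification method can be crawled, rendered, and validated, but it stops before deploy. That keeps unverifiable findings out of releases.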

This is also where a good SEO audit tool earns its place.

It should shorten the path to a clean deploy, not add reports.

If you want the broader system view, see AI SEO Audit Tools Drive Technical SEO Results for Modern Teams.

Where we use AI and where we refuse to

AI cannot replace manual SEO audits.

It replaces the busywork, not the judgment.

That difference matters more in SEO for AI search.

We use AI for clustering, pattern detection, and drafting remediation notes.

It speeds up AI SEO optimization when the site has repeating templates.

Research from AI Technical SEO Audits: The New Frontier in Site Optimization shows early adopters can see up to 25% gains when they shift from periodic fixes to continuous optimization.

We refuse to let AI own root-cause diagnosis.

We also refuse AI-written acceptance criteria without review.

Even with claims of 95% to 98% accuracy for technical checks, accuracy does not equal correctness in your stack. Data indicates top-end accuracy near 98% can happen in controlled checks (AI Technical SEO Audits: The New Frontier in Site Optimization).

Our stance is simple: AI drafts, humans decide.

That is how an audit becomes engineering work.

And that is how any SEO audit tool we build stays honest.

Evidence - What Changed After We Implemented It

Real outcomes - Faster fixes, fewer regressions, more measurable wins

Our best wins didn’t come from fixing everything. They came from fixing the constraint. The one that capped indexation, relevance, or internal authority flow.

I still remember the moment it clicked. We compared rendered HTML to source HTML on a key template. The crawler “saw” a page. Googlebot did too. But our internal links were missing. The nav existed in React, not in the final DOM snapshot. That mismatch created silent dead-ends.
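A comparison like that can be sketched with the standard library alone. The sketch assumes you already have both HTML strings for the template (fetching and rendering are out of scope here), and the names `LinkCollector`, `extract_links`, and `link_diff` are hypothetical.

```python
from html.parser import HTMLParser
from typing import Dict, Set


class LinkCollector(HTMLParser):
    """Collects href values from <a> tags."""

    def __init__(self) -> None:
        super().__init__()
        self.hrefs: Set[str] = set()

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.hrefs.add(value)


def extract_links(html: str) -> Set[str]:
    collector = LinkCollector()
    collector.feed(html)
    collector.close()
    return collector.hrefs


def link_diff(source_html: str, rendered_html: str) -> Dict[str, Set[str]]:
    """Links present in one version of the page but not the other."""
    source = extract_links(source_html)
    rendered = extract_links(rendered_html)
    return {
        "only_in_source": source - rendered,
        "only_in_rendered": rendered - source,
    }
```

Either side of the diff being non-empty on a key template is worth a look: it means some links depend on which version of the page a crawler ends up reading.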

Once we fixed those constraints, the workflow changed. Engineering and content worked from one prioritized backlog. Each ticket had acceptance tests and a proof step. That’s where an SEO audit tool earns its keep.

Examples - Technical fixes that unlocked traffic, not just health scores

We’ve repeatedly seen lift after three patterns get resolved.

First, rendering and indexation mismatches. We ship server-side output for critical links. We confirm in rendered DOM. Then we verify in logs.

Second, templated metadata duplication. Title tags and descriptions drift when templates multiply. That kills relevance at scale, especially in SEO for AI search.

Third, internal link dead-ends on high-intent pages. We fix orphaned hubs. We route authority through real paths, not “related posts” widgets. This is why we wrote Why Most SEO Audit Tools Miss Internal Linking Problems.
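The second and third patterns lend themselves to simple checks over crawl output. The sketch below assumes the crawl has been reduced to two dictionaries, URL to title and URL to outgoing links; the function names and the normalization rule (trim and lowercase) are assumptions.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Set


def duplicate_titles(titles_by_url: Dict[str, str]) -> Dict[str, List[str]]:
    """Group URLs that share a title after trimming and lowercasing."""
    groups = defaultdict(list)
    for url, title in titles_by_url.items():
        groups[title.strip().lower()].append(url)
    return {t: sorted(urls) for t, urls in groups.items() if len(urls) > 1}


def orphaned_pages(
    links_by_url: Dict[str, List[str]], entry_points: Iterable[str]
) -> Set[str]:
    """Known URLs that no walk from the entry points can reach."""
    reachable: Set[str] = set()
    stack = list(entry_points)
    while stack:
        url = stack.pop()
        if url in reachable:
            continue
        reachable.add(url)
        stack.extend(links_by_url.get(url, ()))
    known = set(links_by_url)
    for targets in links_by_url.values():
        known.update(targets)
    return known - reachable
```

A hub that links out but is never linked to shows up in `orphaned_pages`, which is the dead-end case described above. What counts as an entry point (home page, sitemap roots, main nav) is a judgment call for each site.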

Research from AI Technical SEO Audits: The New Frontier in Site Optimization shows AI can detect about 70% of technical issues. That matters in a technical SEO audit. It keeps us focused on patterns, not one-offs.

What we track to prove causality

We don’t claim wins from vibes. We prove them.

We validate impact with pre and post cohorts, tied to annotated deployments. We track query groups, not single keywords. We also watch log-based crawl patterns. That’s how we avoid mistaking seasonality for success.
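A pre and post comparison can be sketched as follows. The comparison group of untouched templates is an assumption added here as one way to separate seasonality from the fix; the text only specifies pre and post cohorts. Function names and the use of clicks as the metric are also illustrative.

```python
def relative_change(pre: float, post: float) -> float:
    """Fractional change from the pre period to the post period."""
    if pre <= 0:
        raise ValueError("pre-period value must be positive")
    return (post - pre) / pre


def adjusted_lift(
    treated_pre: float,
    treated_post: float,
    comparison_pre: float,
    comparison_post: float,
) -> float:
    """Change in the fixed query group minus change in an untouched one.

    Assumption: the comparison group saw the same seasonality but
    not the deployment, so subtracting it removes the shared trend.
    """
    return relative_change(treated_pre, treated_post) - relative_change(
        comparison_pre, comparison_post
    )
```

For example, if clicks on the fixed query group go from 1000 to 1200 while an untouched group goes from 500 to 550, the raw change is 20% but the adjusted lift is about 10 percentage points.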

AI Technical SEO Audits: The New Frontier in Site Optimization found one e-commerce case where important pages were crawled 3x more often after fixes. That’s the signal we want. It links cause to effect.

So what should you expect from an SEO audit tool in the first 30 days? A shorter backlog, fewer repeat bugs, and clearer ownership. You should also expect measurable crawl and indexation shifts. If you don’t, your tool is reporting, not driving AI SEO optimization.

Data indicates teams can spot issues far faster with AI - up to 10x in some workflows (AI Technical SEO Audits: The New Frontier in Site Optimization). Speed only counts when it ships. That’s why we designed the system, not the report.

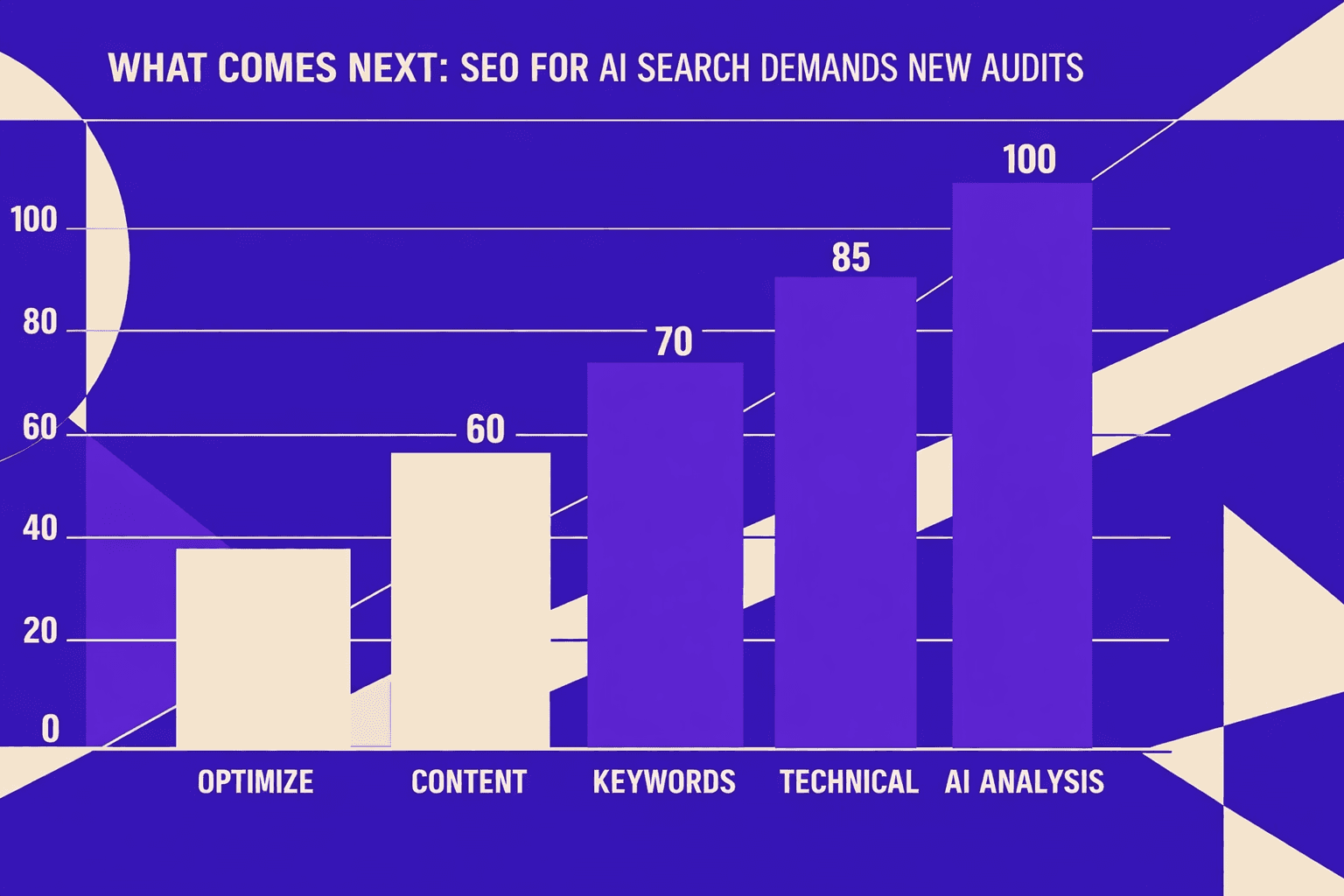

What Comes Next: SEO For AI Search Demands New Audits

That shift matters more now because SEO for AI search rewards sites that are easy to parse, internally consistent, and semantically structured. The winning sites will not be the ones that publish the most pages. They’ll be the ones whose templates produce clean HTML, stable entities, predictable internal linking, and content that matches its own claims across the site. If an AI system can’t reconcile your navigation labels, headings, schema, canonicals, and on-page language, it won’t “average them out.” It will skip you.

This is why I expect audits to stop being quarterly events. Quarterly audits assume the site stays still. Modern stacks don’t. Releases change templates, routing, rendering, and metadata every week. Content ops adds new sections, updates old pages, and spins up new taxonomies. SERPs move faster than your PDF can age. The only sane response is continuous monitoring tied to releases, templates, and the people who own them.

Skeptics are right about one thing: not every team needs a bespoke system. If you ship rarely, have a small site, and your templates barely change, a lightweight workflow can work. But if we’re shipping weekly and competing in volatile SERPs, static reports turn into liabilities. They give you confidence with no control. They tell you what broke after it already cost you crawl, relevance, or revenue.

Our recommendation is simple and operational: treat your audit like a product. Define SLAs and owners. Put automated checks in CI so failures block releases, not rankings. Create a measurable definition of “search-ready” for every release, every template, and every content launch. When audits become part of engineering - not a separate ritual - SEO becomes predictable again.
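As one possible shape for a CI check, the sketch below parses a built page and reports basic "search-ready" failures. The three checks (title present, canonical present, no noindex) are an assumed starting definition rather than a complete one, and `HeadSignals`, `search_ready_failures`, and `exit_code` are hypothetical names.

```python
from html.parser import HTMLParser
from typing import Dict, List, Optional


class HeadSignals(HTMLParser):
    """Collects the title, canonical link, and robots meta from a page."""

    def __init__(self) -> None:
        super().__init__()
        self.in_title = False
        self.title = ""
        self.canonical: Optional[str] = None
        self.robots = ""

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "title":
            self.in_title = True
        elif tag == "link" and (attributes.get("rel") or "").lower() == "canonical":
            self.canonical = attributes.get("href")
        elif tag == "meta" and (attributes.get("name") or "").lower() == "robots":
            self.robots = (attributes.get("content") or "").lower()

    def handle_endtag(self, tag):
        if tag == "title":
            self.in_title = False

    def handle_data(self, data):
        if self.in_title:
            self.title += data


def search_ready_failures(html: str) -> List[str]:
    """Return a list of failed checks; an empty list means the page passes."""
    signals = HeadSignals()
    signals.feed(html)
    signals.close()
    failures = []
    if not signals.title.strip():
        failures.append("missing <title>")
    if not signals.canonical:
        failures.append("missing canonical link")
    if "noindex" in signals.robots:
        failures.append("robots meta contains noindex")
    return failures


def exit_code(pages: Dict[str, str]) -> int:
    """1 if any page fails, so a CI job can block the release."""
    return 1 if any(search_ready_failures(html) for html in pages.values()) else 0
```

In a pipeline, a script could pass the result of `exit_code` to `sys.exit` after rendering one sample page per template, so a failing template stops the release before it reaches production.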

If your team is tired of chasing findings that never land, it’s time to build an audit system that ships with your code. Ready to see what that looks like in your stack? Learn More and let’s discuss your goals.