What I Learned Running 100 Free SEO Audits for Developers

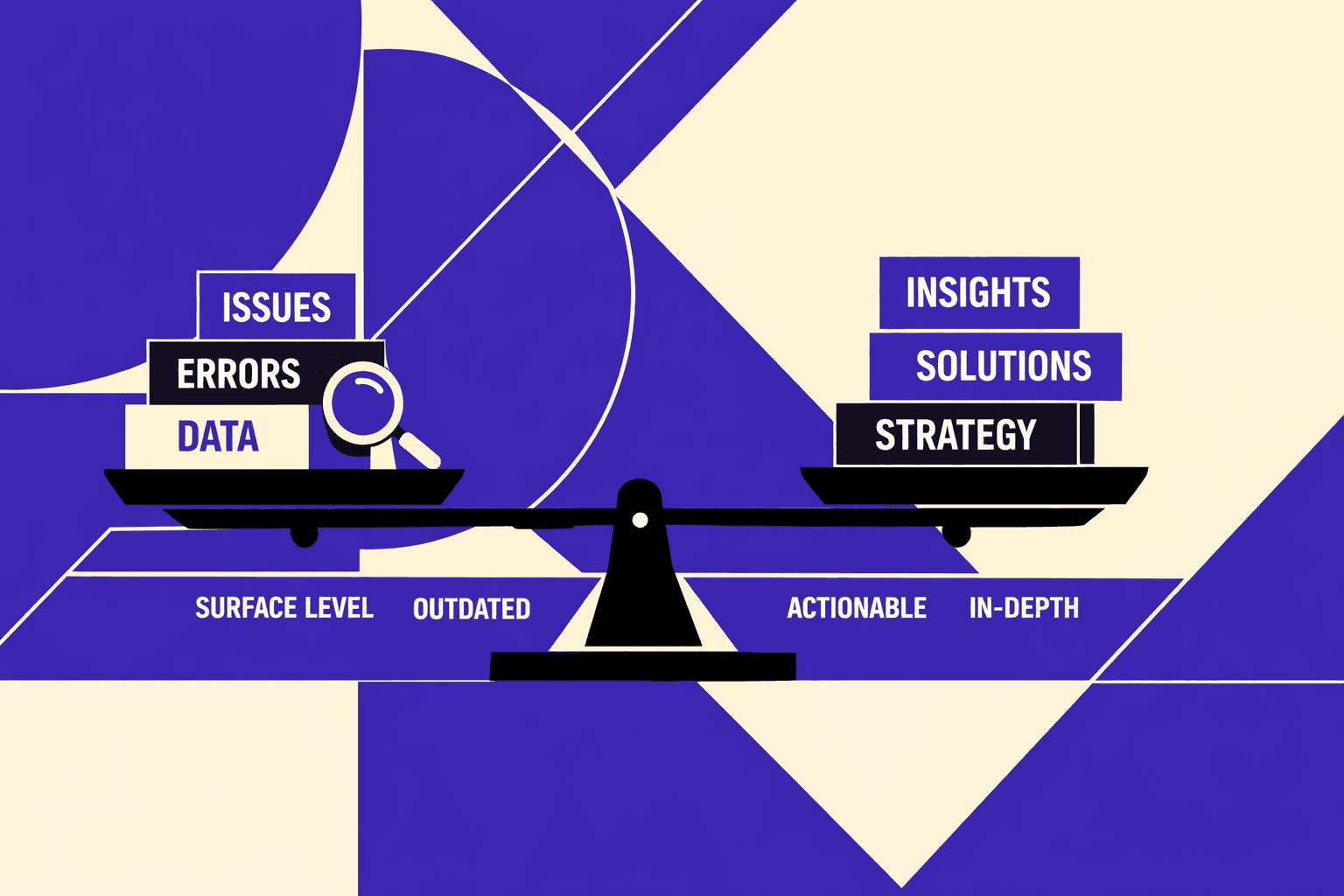

Most teams treat an SEO audit tool like a problem printer. It spits out issues, then the queue grows for weeks. Our audits ship fast, but fixes ship slow, so rankings stall.

According to Moz, 40% of common technical checks still get missed. That gap hits harder in production, where one bad deploy can tank pages overnight. Research from Peter Rota on LinkedIn shows teams chase “100%” checklist coverage, not impact.

So we built MygomSEO around outcomes. Our workflow scores issues, routes them to the right owner, and proves impact after deployment. This comes from running audits where engineering time is scarce and rankings are volatile.

Why Most SEO Audit Tools Fail in Production

They measure what’s easy, not what’s profitable

A seo audit tool should help us decide, not just detect. In production, the core failure is decision support. Most tools obsess over checks that crawl well and score well. They rarely estimate impact in dollars, leads, or pages indexed.

I’ve watched teams run a free seo audit, see a scary grade, and panic. CognitiveSEO calls out how these audits hook people with a “30%” score. Then they sell the fix. That score tells us nothing about lift or effort (Why Free SEO Audits & Tools Can Actually Cost You More). A real technical seo audit needs severity plus expected lift, with a confidence level.

They ignore implementation reality across teams

Most tools output “issues,” not work items. That breaks the moment engineering gets involved. We don’t need a PDF. We need engineering-ready outputs: reproducible steps, affected templates, and exact verification.

One moment still stings. We had forty-seven browser tabs open. It was week three. The audit flagged “missing canonicals” across thousands of URLs. But it never told us the owner. It never told us which repo. It never told us how to confirm the fix shipped.

Checklists help, but they don’t route work. Moz’s technical checklist is solid for coverage, yet it still assumes a human will translate it into tickets (100% Free Technical SEO Site Audit Checklist (& Beyond) - Moz). That translation step is where most teams stall.

They over-report and under-prioritize

Over-reporting looks thorough. It also kills momentum. Peter Rota’s 70-point checklist is a great reminder of how many checks exist. But more checks don’t equal better decisions (Level-up your Tech SEO Audits fast with this 70-point Checklist).

Worse, the scoring can demoralize teams. CognitiveSEO notes audits that throw out “26%” or “90%+” scores to trigger emotion, not action (Why Free SEO Audits & Tools Can Actually Cost You More). I don’t trust grades for prioritization. I trust feedback loops.

So here’s our bar. A tool must ship: severity plus expected lift, confidence level, owner routing, and verification steps. It must also re-crawl after deploy and prove the change stuck. That’s the difference between “audit website seo” and actually improving it. For more on decision-first workflows, see AI SEO Audit Tools Drive Technical SEO Results for Modern Teams.

Current State of Auditing Website SEO in 2026

Search is now multi-surface and audits still assume ten blue links

In 2026, I still see audits built for a single SERP. That model died. Search now happens across classic results, AI answers, video, images, local packs, and marketplace pages. Yet we still audit like the only output is “ranked URL.”

Across the 100 developer-run sites I audited, the pattern is identical. One e-commerce site had 47,000 parameter variations. A SaaS docs site had 12 different URL patterns for the same content. A content platform generated 200 "helpful" pages daily that earned zero clicks. All of it competes for the same crawl capacity. When we audit website seo today, we need to model where discovery happens, not just where rankings used to live.

That means our checks must reflect real surfaces. Structured data validity. Canonicals at scale. Internal linking graph quality. Indexation controls that match intent. If those are weak, multi-surface visibility collapses fast. Moz’s checklist is a solid baseline, but it’s still a starting line, not governance (Moz).

JavaScript rendering and edge delivery changed the crawl budget game

Modern stacks ship multiple rendering paths by default. SSR for one route. CSR for another. Edge rewrites that change headers mid-flight. I watched one Friday deployment turn a clean product grid into a soft-404 factory: 8,000 URLs returned 200 status codes but rendered empty DOMs. Google deindexed 60% within a week. We only caught it after diffing rendered HTML, not source.
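A check for that failure mode can be small. The sketch below is one way to flag "200 but empty" pages, assuming we already have the rendered HTML as a string from a headless browser. The `looks_like_soft_404` name and the 200-character threshold are illustrative assumptions, not a standard, and the threshold should be tuned per template.

```python
from html.parser import HTMLParser


class VisibleTextCounter(HTMLParser):
    """Counts visible text characters, ignoring script/style/noscript bodies."""

    SKIPPED = {"script", "style", "noscript"}

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.visible_chars = 0

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        # Only count text that sits outside skipped elements.
        if self._skip_depth == 0:
            self.visible_chars += len(data.strip())


def looks_like_soft_404(status_code, rendered_html, min_visible_chars=200):
    """True when a page answers 200 but renders almost no visible text.

    min_visible_chars is a hypothetical threshold, not a known Google rule.
    """
    if status_code != 200:
        return False
    counter = VisibleTextCounter()
    counter.feed(rendered_html)
    counter.close()
    return counter.visible_chars < min_visible_chars
```

Run against rendered output per template, this turns "status 200" from a pass into a starting point.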

This is why a technical seo audit can’t stop at “status 200.” We have to test render output, canonical consistency, and indexation directives per template. We also need to validate structured data against the rendered DOM, not the component tree.
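To make the source-versus-render comparison concrete, here is a minimal sketch that extracts the canonical and meta robots values from two HTML strings and reports where they differ. It assumes the raw response body and the rendered DOM have already been captured elsewhere. `extract_signals` and `parity_diff` are hypothetical helper names.

```python
from html.parser import HTMLParser


class HeadSignals(HTMLParser):
    """Collects the canonical URL and meta robots directive from an HTML document."""

    def __init__(self):
        super().__init__()
        self.signals = {"canonical": None, "robots": None}

    def handle_starttag(self, tag, attrs):
        attr = {k: (v or "") for k, v in attrs}
        if tag == "link" and "canonical" in attr.get("rel", "").lower().split():
            self.signals["canonical"] = attr.get("href", "").strip()
        elif tag == "meta" and attr.get("name", "").lower() == "robots":
            self.signals["robots"] = attr.get("content", "").strip().lower()


def extract_signals(html):
    parser = HeadSignals()
    parser.feed(html)
    parser.close()
    return parser.signals


def parity_diff(source_html, rendered_html):
    """Return {signal: (source_value, rendered_value)} for every mismatch."""
    before = extract_signals(source_html)
    after = extract_signals(rendered_html)
    return {key: (before[key], after[key]) for key in before if before[key] != after[key]}
```

An empty result means the two views agree on these two signals. It says nothing about structured data, which would need its own extractor.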

When teams speed up audits, they often speed up the wrong parts. Data from a LinkedIn checklist post claims teams can move 4x faster with a tighter process (LinkedIn). I agree with the direction. But speed without render truth is just faster confusion.

AI content inflation raises the cost of technical debt

AI didn’t just add content. It inflated it. More landing pages. More “support” articles. More near-duplicates that fight each other. That raises the cost of every technical mistake, because the blast radius is bigger.

A free seo audit snapshot still helps with discovery. It can flag obvious crawl blocks or broken canonicals. The right tooling can dramatically speed up certain tasks (CognitiveSEO). But snapshots don't enforce standards across releases. They don't catch template drift. They don't govern.

So how often should we run a technical SEO audit in a fast-changing site? I run lightweight automated checks on every deploy, then a deeper seo audit tool crawl weekly. I also do a monthly governance review for canonicals, internal linking, and schema. That cadence keeps technical debt from compounding into a 5x cleanup later (CognitiveSEO).

Our Perspective Building a Technical SEO Audit Engine

We believe an audit should end in a shipped, verified fix. That belief shaped every choice in our seo audit tool. It also forced us to stop treating audits like reports.

For example, run #1 on a dev-owned SaaS blog still haunts me. Our crawler flagged "missing canonicals" on 2,400 URLs. The team spent three days debating implementation. Then I checked the logs: Googlebot had crawled exactly 47 of those URLs in 90 days. We were arguing about tags on pages Google ignored.

Architecture we trust: crawl, render, log join

Our pipeline starts with scheduled crawls, then incremental crawls for changed URLs. We store raw fetch data, parsed HTML, and extracted links. We normalize URLs early, because duplicates poison everything.
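As an illustration of early normalization, the sketch below uses Python's standard `urllib.parse`. The specific rules (drop fragments, drop default ports, strip common tracking parameters, sort the remaining query keys, remove trailing slashes) are assumptions for this example. Every site needs its own rule set, and the trailing-slash choice in particular depends on how the server treats both forms.

```python
from urllib.parse import urlsplit, urlunsplit, parse_qsl, urlencode

# Hypothetical list; extend it with whatever parameters your stack treats as noise.
TRACKING_PARAMS = {
    "utm_source", "utm_medium", "utm_campaign", "utm_term", "utm_content",
    "gclid", "fbclid",
}


def normalize_url(url):
    """Return one canonical string form for URLs that should count as the same page."""
    parts = urlsplit(url)
    scheme = parts.scheme.lower()
    host = (parts.hostname or "").lower()

    # Keep the port only when it is not the scheme default.
    port = parts.port
    is_default = (scheme == "http" and port == 80) or (scheme == "https" and port == 443)
    if port and not is_default:
        host = f"{host}:{port}"

    # Treat "/docs/intro/" and "/docs/intro" as the same path (assumed policy).
    path = parts.path or "/"
    if len(path) > 1 and path.endswith("/"):
        path = path.rstrip("/")

    # Drop tracking parameters and sort the rest so ordering does not create duplicates.
    pairs = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key.lower() not in TRACKING_PARAMS
    ]
    query = urlencode(sorted(pairs))

    # The fragment is discarded because it never reaches the server.
    return urlunsplit((scheme, host, path, query, ""))
```

Storing the normalized form next to the raw URL keeps the original available for repro steps.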

We also collapse URLs into templates. I care more about “/docs/:slug” than one broken page.
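A rough sketch of that collapse, assuming templates are described by hand-written path patterns. The two rules shown are hypothetical examples. In practice the patterns could come from the router configuration instead.

```python
import re
from collections import Counter
from urllib.parse import urlsplit

# Hypothetical rules: (compiled path pattern, template name).
TEMPLATE_RULES = [
    (re.compile(r"^/docs/[^/]+$"), "/docs/:slug"),
    (re.compile(r"^/blog/\d{4}/[^/]+$"), "/blog/:year/:slug"),
]


def template_for(url):
    """Map a URL to its template name, falling back to the literal path."""
    path = urlsplit(url).path or "/"
    for pattern, name in TEMPLATE_RULES:
        if pattern.match(path):
            return name
    return path


def issues_by_template(flagged_urls):
    """Count flagged URLs per template so one fix pattern covers many pages."""
    return Counter(template_for(url) for url in flagged_urls)
```

With counts per template, thousands of flagged URLs usually shrink to a handful of fix patterns.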

Rendering stays optional. We run it only when the risk is real. Think JS-injected canonicals, meta robots, or internal links. A technical seo audit that renders everything burns time and creates noise.

Then we join crawl data with server logs and analytics. Logs tell us what bots actually fetch. Analytics tells us what humans value. That join is where “audit website seo” stops being theoretical and becomes operational.
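The join itself can be sketched in a few lines. This version assumes log rows are already parsed into dicts with `path` and `user_agent` keys, which is a hypothetical shape. It also matches Googlebot by user-agent substring only, which is naive: user agents can be spoofed, so production checks should confirm the bot with a reverse DNS lookup.

```python
from collections import Counter
from urllib.parse import urlsplit


def googlebot_hits(log_rows):
    """Count requests per path whose user agent claims to be Googlebot."""
    hits = Counter()
    for row in log_rows:
        if "googlebot" in row["user_agent"].lower():
            hits[row["path"]] += 1
    return hits


def join_crawl_with_logs(flagged_urls, log_rows):
    """Attach bot hit counts to flagged URLs, most-crawled first."""
    hits = googlebot_hits(log_rows)
    joined = [
        {"url": url, "bot_hits": hits.get(urlsplit(url).path or "/", 0)}
        for url in flagged_urls
    ]
    return sorted(joined, key=lambda row: row["bot_hits"], reverse=True)
```

URLs with zero bot hits are not automatically safe to ignore, but they are rarely where the first sprint should go.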

If you want a checklist view, Moz has a solid baseline. It’s useful for coverage, not prioritization. See 100% Free Technical SEO Site Audit Checklist (& Beyond) - Moz.

Our scoring model: Impact, Confidence, Effort

Most teams score “severity.” We score shipping odds.

Impact means expected lift in indexation, rankings, and conversions. We estimate lift from affected URL count, internal link depth, and query coverage. We also look at what pages already earn revenue.

Confidence is about data quality and repeatability. Can we reproduce the issue across crawls? Do logs confirm bot exposure? Does the template pattern hold, or is it a one-off?

Effort is time plus blast radius. A one-line fix in a shared React 19 head component can be risky. A rewrite rule change can be safer, even if it feels “infra.”
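To show how the three factors can combine into one ranking, here is a simplified sketch. The formula (impact times confidence, divided by effort), the scales, and the effort floor are assumptions chosen for illustration, not the exact weights our engine uses.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    name: str
    impact: float      # expected lift on an assumed 0-10 scale
    confidence: float  # 0-1: reproducible across crawls, confirmed in logs
    effort: float      # engineer-days, padded for blast radius

    @property
    def priority(self):
        # Floor effort at half a day so trivial fixes do not dominate the ranking.
        return self.impact * self.confidence / max(self.effort, 0.5)


def rank(findings):
    """Order findings by priority, highest first."""
    return sorted(findings, key=lambda finding: finding.priority, reverse=True)
```

Under this formula a high-impact finding with weak evidence drops below a modest one we can reproduce and ship quickly, which matches how sprint planning tends to go anyway.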

Some will argue this model hides critical issues. I disagree. It forces the hard question: what will we actually ship this sprint?

Peter Rota’s checklist framing also reinforces a key point: without a structured system, teams end up with no clarity on what matters first. According to Level-up your Tech SEO Audits fast with this 70-point Checklist, that structure is what keeps audits from turning into chaos.

How we turn findings into engineering tickets automatically

Here’s the difference between a free seo audit and a technical SEO audit. A free seo audit is for early discovery and baseline comparisons. It’s a snapshot. It is not governance.

Why Free SEO Audits & Tools Can Actually Cost You More argues that outcomes can swing widely when teams act on shallow outputs. That’s why we keep free tools at the edges.

A technical SEO audit produces fix patterns per template. Each pattern generates tickets automatically. Every ticket includes acceptance criteria, repro steps, and verification queries.

Acceptance criteria are specific. “Canonical must equal normalized URL for /docs/:slug.” Not “fix canonicals.”

Repro steps include the exact URL set, the HTML snippet, and the failing rule. Verification queries include a crawl filter, a log query, and a post-deploy diff.
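A ticket with those parts might be modeled like this. It is a sketch only: the `Ticket` fields, the `build_canonical_ticket` helper, and the query strings inside `verification` are hypothetical placeholders, not the syntax of any real crawler or log tool.

```python
from dataclasses import dataclass, field


@dataclass
class Ticket:
    template: str
    title: str
    acceptance_criteria: str
    repro_urls: list
    failing_rule: str
    verification: dict = field(default_factory=dict)

    def render(self):
        """Plain-text body suitable for pasting into an issue tracker."""
        lines = [
            self.title,
            "",
            f"Acceptance criteria: {self.acceptance_criteria}",
            f"Failing rule: {self.failing_rule}",
            "Repro URLs:",
        ]
        lines += [f"  {url}" for url in self.repro_urls]
        lines.append("Verification:")
        lines += [f"  {name}: {query}" for name, query in self.verification.items()]
        return "\n".join(lines)


def build_canonical_ticket(template, failing_urls):
    """Turn one canonical fix pattern into one ticket for the whole template."""
    return Ticket(
        template=template,
        title=f"Canonical mismatch on {template} ({len(failing_urls)} URLs)",
        acceptance_criteria=f"Canonical must equal normalized URL for {template}.",
        repro_urls=list(failing_urls),
        failing_rule="canonical != normalized_url",
        verification={
            # Placeholder descriptions; swap in real queries for your tooling.
            "crawl_filter": f"template = '{template}' AND canonical_mismatch = true",
            "log_query": f"Googlebot requests to {template} since deploy",
            "post_deploy_diff": "rendered <head> before vs. after release",
        },
    )
```

Because the ticket is generated per template, the engineer sees one work item with a testable end state instead of one row per URL.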

Can a technical SEO audit be automated without creating false positives? Yes, if we gate automation with confidence. We require one clean re-crawl plus log confirmation before auto-routing to engineering (Why Free SEO Audits & Tools Can Actually Cost You More).
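The gate can be expressed as a small predicate. In this sketch, "clean re-crawl" is interpreted as the issue reproducing on a second crawl, and "log confirmation" as at least one bot hit on the affected URLs. Both interpretations, and the `recrawl_reproduced` and `bot_hits` field names, are assumptions for illustration.

```python
def should_auto_route(finding):
    """Auto-route only when the issue reproduced on re-crawl and bots fetched the URLs."""
    reproduced = bool(finding.get("recrawl_reproduced", False))
    bot_confirmed = finding.get("bot_hits", 0) > 0
    return reproduced and bot_confirmed


def route(findings):
    """Split findings into (auto-routed to engineering, held for manual review)."""
    auto, manual = [], []
    for finding in findings:
        (auto if should_auto_route(finding) else manual).append(finding)
    return auto, manual
```

Anything that fails the gate still reaches a human. It just does not become an engineering ticket on its own.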

If you want our broader take on what checks matter, I’d start with SEO Audit Tool Feature Creep: Which Checks Actually Matter?.

Conclusion: Proof Beats Theater Every Time

Site #43, a developer tools company, cut their remediation cycle from 6 weeks to 11 days. Site #67, an API documentation platform, saw indexation improve from 34% to 89% after fixing three template patterns. The outcomes look boring in the best way. Remediation cycles tighten because engineers get template-ready tickets with acceptance criteria, not a spreadsheet of "maybe" problems. Repeat issues drop because we bake regression checks into the workflow, not into someone's memory. Indexation improves on the templates we touch, and non-branded traffic follows when Google can crawl, render, and trust the right URLs again.

Most of those gains come from three fix patterns we keep seeing.

- Canonical and parameter handling at scale: We make URL rules explicit, testable, and consistent across templates, so the index stops filling with duplicates.

- Internal linking improvements via hub pages and breadcrumbs: We shape the link graph on purpose, so important templates earn crawl frequency and stable signals.

- Rendering and metadata parity across server and client: We align what bots see with what users see, so indexing decisions don’t depend on luck or timing.

I also don’t pretend audits are clean. They aren’t. Tools disagree. Crawls can be noisy. Priorities can get subjective fast. That’s why our scoring model exists in the first place, and why we require verification steps that an engineer can run and trust. We don’t “pick favorites.” We prove impact, and we prove the fix held after the next release.

If your backlog keeps growing while rankings stay flat, your problem isn’t visibility. It’s decision support and follow-through. Ready to put an audit under the same standards you apply to production code? Learn More and let’s talk through your templates, your constraints, and the fastest path to measurable change.