Why Your Technical SEO Audit Should Start With HTTPS (Not Content)

Most teams buy an seo audit tool and get a prettier checklist. Then they ship a bigger PDF. That’s backwards, because audits should ship fixes.

We see teams drowning in dashboards while Core Web Vitals, indexation, and JavaScript rendering issues compound in production. According to BruceClay, 53% of site traffic comes from organic search, yet most audits still stop at reporting. Data from BruceClay shows 44% of shoppers start their journey on a search engine.

At MygomSEO, we built an audit system that turns findings into tickets engineers can merge. We’ll show our technical tradeoffs, how we implemented it, and how we measure outcomes. This comes from technical SEO work inside real release cycles where developers own the deploy.

Why Most SEO Audit Tools Fail Technical SEO Audits

They score pages instead of explaining root cause

I still remember the audit that broke my patience. We had 47 tabs open, a crawl report, and a sea of “warnings.” The tool told us “duplicate titles” across hundreds of pages. It never told us the real cause: a single shared template string in a header component.

That gap is why “scores” fail developers. Scores do not tell you which route, which template, or which conditional rendered the bad tag. They do not tell you whether Googlebot saw the same HTML. Neil Patel’s audit guide (Technical SEO Audit: Easy Guide to a Comprehensive Audit) lists many checks, but the hard part is moving from check to fix. That handoff is where most tools collapse.

They ignore developer constraints and release reality

Most reports pretend every fix is a same-day tweak. Our reality is tickets, owners, deploy windows, and risk. A finding only matters if it ships. That means every issue needs a concrete recommendation, a clear owner, and a verification step.

We also prioritize a small set of high-leverage failure modes. Crawl traps. Indexation conflicts. Broken canonicals. Template-level meta mistakes. Internal linking decay. Performance regressions that appear after a release. According to BruceClay, one case study tied technical SEO work to a 166% jump in website performance outcomes, but only because the work was actionable.

If your tool cannot export raw, testable evidence, your team will guess. That is why we obsess over reproducible outputs like logs, rendered HTML, and URL-level diffs, and why I keep pointing teams to SEO Audit Data Export: Why Most Tools Hide Your Results.

They treat JavaScript and rendering as edge cases

In 2026, JavaScript-heavy sites are not “advanced.” They are normal. Yet many tools still crawl like it is 2012. They miss SSR drift, hydration gaps, and robots rules that only appear after rendering. Even HTTPS adoption sits at 85.4% in one industry snapshot cited by BruceClay, which means a long tail of sites still ships mixed setups that break canonicalization and redirects in subtle ways.

For a visual walkthrough of what a real audit flow looks like, check out this tutorial from Google Search Central:

XYOUTUBEX0XYOUTUBEX

So what is the best seo audit tool for real technical issues? The best seo audit tool is the one that produces developer-grade evidence: a failing URL, the rendered HTML, the owning code path, and a CI-verifiable test. Anything else is a dashboard.

And when redirects show up, they need real math, not vibes. NAV43 found that each redirect can cost about 15% of link equity, so “just add another hop” is not a harmless suggestion.
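To make the compounding concrete, here is a minimal sketch that treats NAV43's roughly 15% per-hop loss as a fixed multiplicative factor. That constant is an assumption borrowed from the claim above, not a published property of any search engine, and the names `PER_HOP_LOSS` and `retained_equity` are hypothetical. The point is only that per-hop losses multiply rather than add.

```python
# Minimal sketch: compounding link-equity loss across a redirect chain.
# ASSUMPTION: a fixed ~15% loss per hop, taken from the NAV43 estimate
# cited in the text. Search engines do not publish such a constant.

PER_HOP_LOSS = 0.15  # hypothetical per-redirect loss factor


def retained_equity(hops: int, per_hop_loss: float = PER_HOP_LOSS) -> float:
    """Return the fraction of equity left after `hops` redirects."""
    if hops < 0:
        raise ValueError("hops must be non-negative")
    return (1.0 - per_hop_loss) ** hops


if __name__ == "__main__":
    for hops in range(4):
        print(f"{hops} hop(s): {retained_equity(hops):.1%} retained")
```

Under that assumption, a three-hop chain keeps about 61% of the original equity (0.85 cubed), which is why collapsing chains to a single hop is the usual recommendation.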

Current State of Technical SEO Audit Automation

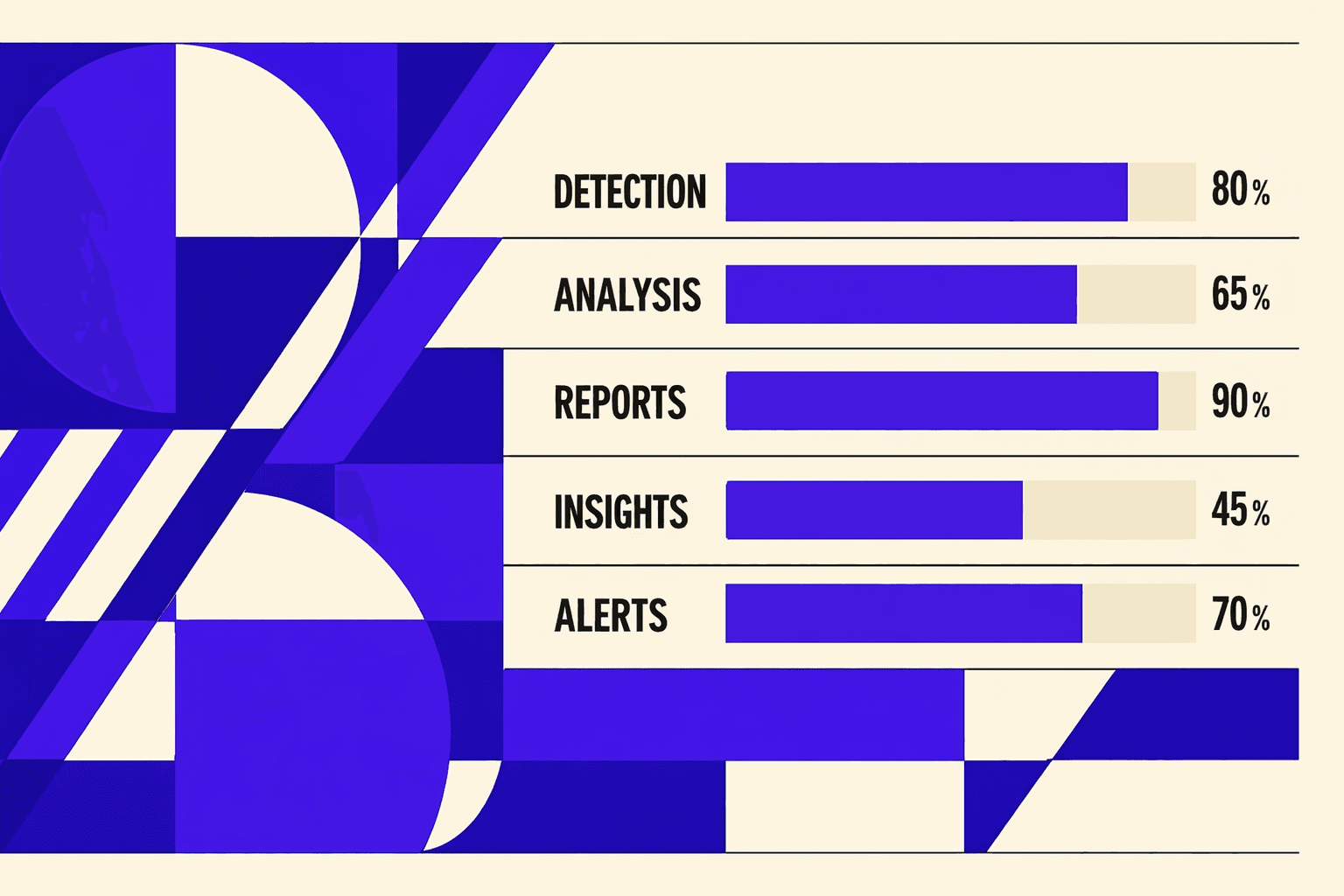

What automation does well today

Automation finally wins at breadth. It crawls fast, clusters patterns, and flags obvious breakage. That matters when mobile traffic dominates. According to Technical SEO Audit: Easy Guide to a Comprehensive Audit, almost 59% of global web traffic is mobile.

In a modern technical seo audit, I want this kind of sweep first. I want to know what is broken across templates. I want to see where issues repeat. The best seo audit tool workflows today do that in minutes, not days.
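One way to see where issues repeat is to collapse each flagged URL into a coarse path shape and count findings per shape. The sketch below assumes findings arrive as hypothetical `(url, issue)` pairs and treats numeric segments and long hyphenated slugs as the variable part of a route. Both rules are illustrative heuristics, not what any particular crawler does.

```python
import re
from collections import Counter
from urllib.parse import urlsplit

# Heuristics (assumptions): numeric IDs and hyphenated slugs with 3+ words
# are treated as the variable part of a route.
_NUMERIC = re.compile(r"^\d+$")
_SLUG = re.compile(r"^[a-z0-9]+(?:-[a-z0-9]+){2,}$")


def url_shape(url: str) -> str:
    """Collapse a URL path into a coarse, template-like shape."""
    parts = []
    for segment in urlsplit(url).path.strip("/").split("/"):
        if _NUMERIC.match(segment):
            parts.append("{id}")
        elif _SLUG.match(segment):
            parts.append("{slug}")
        else:
            parts.append(segment)
    return "/" + "/".join(parts)


def cluster_findings(findings):
    """Count (shape, issue) pairs so repeated template bugs stand out."""
    return Counter((url_shape(url), issue) for url, issue in findings)


findings = [
    ("https://example.com/products/123", "duplicate_title"),
    ("https://example.com/products/456", "duplicate_title"),
    ("https://example.com/blog/how-to-fix-canonicals", "missing_h1"),
]
for (shape, issue), count in cluster_findings(findings).most_common():
    print(count, shape, issue)
```

Hundreds of “duplicate title” rows that collapse into one `/products/{id}` bucket point at a shared template, which is the root-cause signal a per-page score hides.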

Where automation still lies to you

Automation still lies about “truth.” It sees the HTML it fetched, then assumes Google sees the same thing. That assumption fails on real stacks.

For example, we audited an SPA with edge rendering. Run #1 looked clean. Then a personalization flag flipped. Canonicals changed, headings shifted, and internal links disappeared. Same URL. Different page. The crawler never noticed.

Modern stacks break “one URL equals one page.” SPAs, edge rendering, A/B tests, and per-user personalization all rewrite reality. Rendering parity becomes a moving target. Intent mapping breaks when templates share the same URL shape. Ownership gets muddy when marketing controls copy, but engineers own routing.
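A basic guard against the personalization case is to extract the SEO-critical fields from two renders of the same URL and diff them. This sketch uses the standard-library `html.parser` on already-rendered HTML strings. Producing those renders (a headless browser, each flag state) is assumed and out of scope, and `extract` and `parity_diff` are hypothetical helper names.

```python
from html.parser import HTMLParser


class SeoFields(HTMLParser):
    """Collect canonical, meta robots, first <h1> text, and link hrefs."""

    def __init__(self):
        super().__init__()
        self.canonical = None
        self.robots = None
        self.h1 = None
        self.links = set()
        self._in_h1 = False
        self._h1_text = []

    def handle_starttag(self, tag, attrs):
        a = dict(attrs)
        if tag == "link" and (a.get("rel") or "").lower() == "canonical":
            self.canonical = a.get("href")
        elif tag == "meta" and (a.get("name") or "").lower() == "robots":
            self.robots = a.get("content")
        elif tag == "a" and a.get("href"):
            self.links.add(a["href"])
        elif tag == "h1" and self.h1 is None:
            self._in_h1 = True

    def handle_endtag(self, tag):
        if tag == "h1" and self._in_h1:
            self._in_h1 = False
            self.h1 = "".join(self._h1_text).strip()

    def handle_data(self, data):
        if self._in_h1:
            self._h1_text.append(data)


def extract(html: str) -> dict:
    parser = SeoFields()
    parser.feed(html)
    parser.close()
    return {"canonical": parser.canonical, "robots": parser.robots,
            "h1": parser.h1, "links": parser.links}


def parity_diff(render_a: str, render_b: str) -> dict:
    """Return {field: (value_a, value_b)} for every SEO field that differs."""
    a, b = extract(render_a), extract(render_b)
    return {key: (a[key], b[key]) for key in a if a[key] != b[key]}


flag_off = '<link rel="canonical" href="/p/1"><h1>Shoes</h1><a href="/c/shoes">x</a>'
flag_on = '<link rel="canonical" href="/p/1?v=b"><h1>Shoes</h1>'
print(parity_diff(flag_off, flag_on))
```

An empty diff means the two renders agree on the fields that matter for indexing; anything else is the “same URL, different page” case the crawler missed.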

Even protocols tell a similar story. BruceClay’s 5 Technical SEO Best Practices You Can't Ignore notes that HTTP/3 adoption is still around 3%. The web ships unevenly, and tool assumptions age fast.

Why free SEO checker tools hit a ceiling

A free SEO checker is worth using for technical SEO, but only for triage. I use one to get a quick signal. Then I switch to a workflow that matches production.

Free checkers rarely handle environment parity. They do not validate behind auth. They do not crawl staging safely. They do not prevent regressions after a deploy. They also struggle to prove what changed, and why.

That is why our audits moved to continuous monitoring. The shift is bigger than tooling. We now tie checks to deployments and log data, not quarterly snapshots. If you cannot diff findings across releases, you cannot ship with confidence.
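Diffing findings across releases can start as plain set arithmetic over stable finding keys. The sketch assumes each finding is identified by a hypothetical `(rule_id, url)` key, and the release names are made up. Real systems may need a richer key, but the new / fixed / persisting split is the part that lets you ship with confidence.

```python
from typing import Dict, Set, Tuple

Finding = Tuple[str, str]  # (rule_id, url): a hypothetical stable key


def diff_runs(before: Set[Finding], after: Set[Finding]) -> Dict[str, Set[Finding]]:
    """Split findings between two audit runs into new, fixed, and persisting."""
    return {
        "new": after - before,
        "fixed": before - after,
        "persisting": before & after,
    }


release_41 = {("canonical_mismatch", "/products/1"), ("missing_h1", "/blog/a")}
release_42 = {("missing_h1", "/blog/a"), ("noindex_on_indexable", "/category/shoes")}

for bucket, items in diff_runs(release_41, release_42).items():
    print(bucket, sorted(items))
```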

If you want to go deeper on portability, read SEO Audit Data Export: Why Most Tools Hide Your Results. According to BruceClay’s 5 Technical SEO Best Practices You Can't Ignore, 25% of pages still have basic HTTPS-related gaps. That is the cost of audits that do not run like engineering.

Our Perspective Building an SEO Audit Tool for Developers

Thesis in practice: we designed for engineering adoption

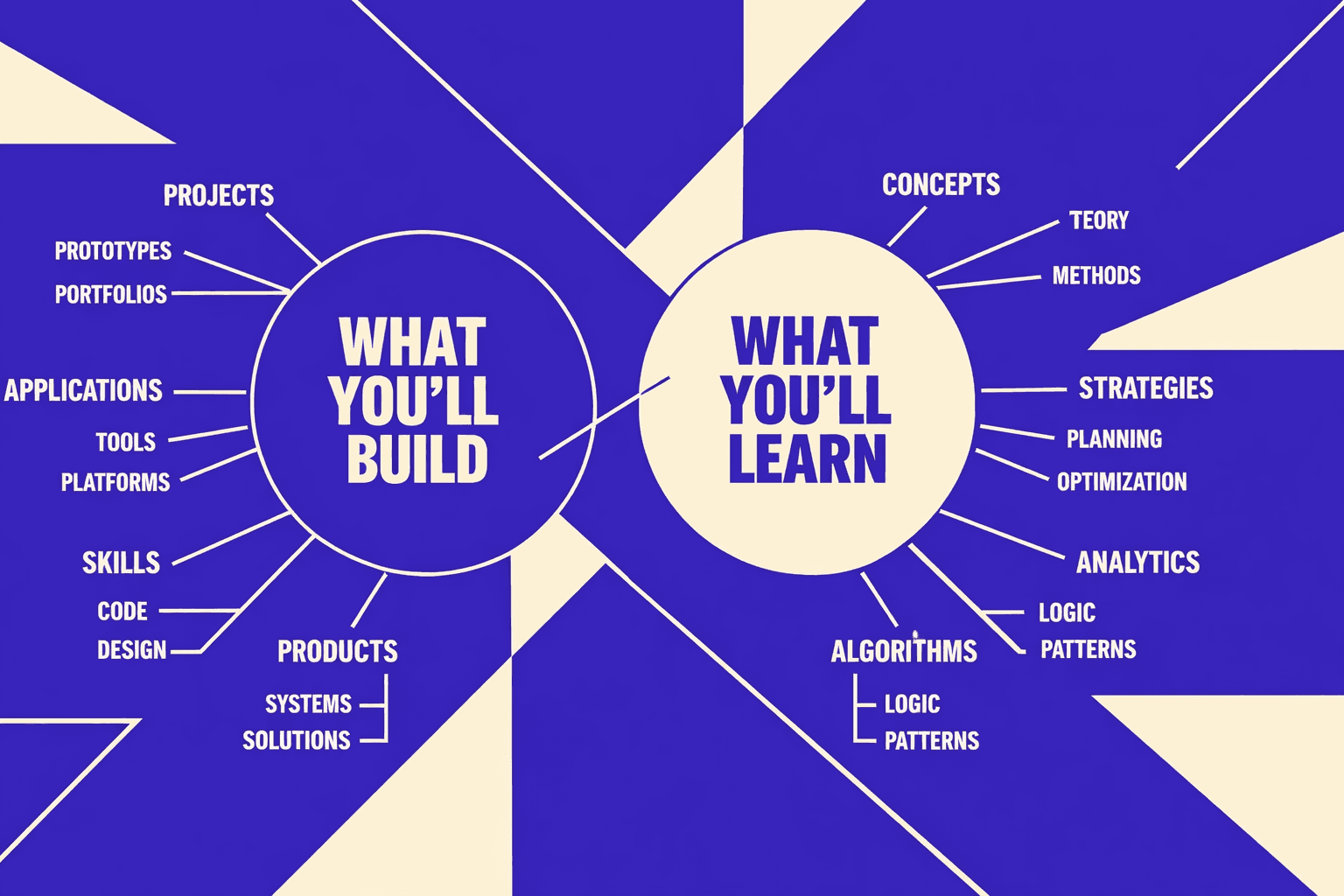

We built our seo audit tool around one principle: developers fix what they can verify, reproduce, and ship safely.

So we stopped writing “SEO findings.” We started writing test cases.

I still remember the moment it clicked.

We had an audit spreadsheet open, a staging URL, and a Slack thread on fire.

The dev lead asked, “What’s the exact request, and what should I see?”

That question became our product spec: SEO for developers.
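One way to answer “What’s the exact request, and what should I see?” is to ship the request and the expectation together. Below is a hypothetical shape for such a test case, rendered as a `curl` command a developer can paste. The `SeoTestCase` class and its field names are assumptions; the user-agent string is Google's documented Googlebot desktop string, used here only for illustration.

```python
import shlex
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class SeoTestCase:
    """A finding phrased as a reproducible request plus expected response."""
    url: str
    user_agent: str
    expect_status: int
    expect_headers: Dict[str, str] = field(default_factory=dict)

    def curl(self) -> str:
        # GET the URL without following redirects, discard the body,
        # and dump the response headers to stdout.
        return " ".join([
            "curl", "-s", "-o", "/dev/null", "-D", "-",
            "-A", shlex.quote(self.user_agent),
            shlex.quote(self.url),
        ])

    def describe(self) -> str:
        lines = [f"Run: {self.curl()}", f"Expect status: {self.expect_status}"]
        lines += [f"Expect header {k}: {v}" for k, v in self.expect_headers.items()]
        return "\n".join(lines)


case = SeoTestCase(
    url="http://example.com/products/123",
    user_agent="Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)",
    expect_status=301,
    expect_headers={"Location": "https://example.com/products/123"},
)
print(case.describe())
```

Because `curl` does not follow redirects unless asked to, the output shows the first hop's status and `Location` header, which is exactly what the expectation is written against.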

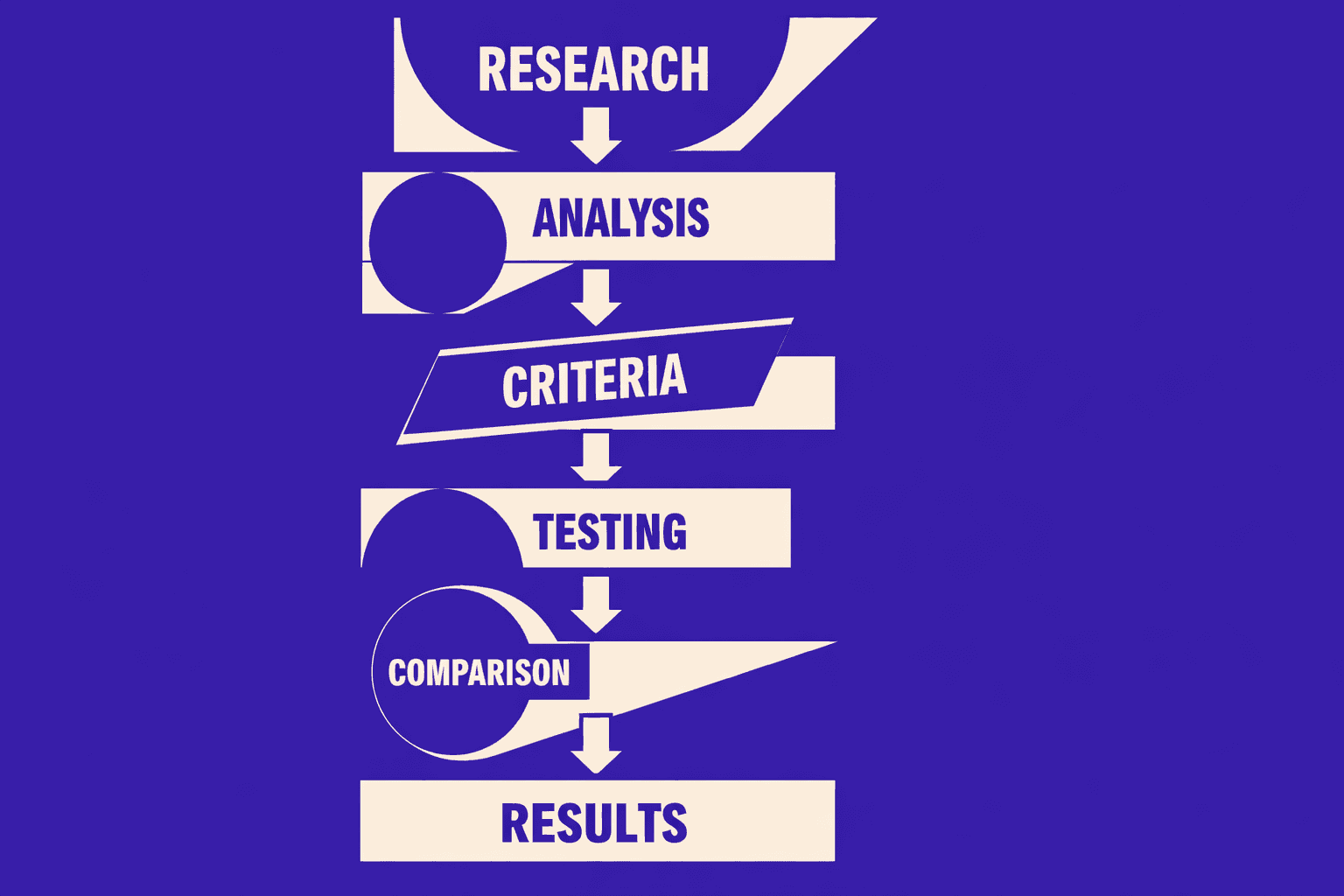

How we built it: crawling, rendering, and rule evaluation

Most platforms blur three steps, then argue about the output.

We separated them on purpose: discovery, truth, and severity.

Discovery is the crawl. It maps the URL universe fast.
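Discovery at its simplest is a breadth-first walk over links. To keep the sketch runnable without network access, it takes a hypothetical `get_links(url)` callable (in practice an HTTP fetch plus link extraction) and stays on the start host with a page cap. Both limits are assumptions you would tune, not fixed parts of the approach.

```python
from collections import deque
from typing import Callable, Iterable, List
from urllib.parse import urldefrag, urljoin, urlsplit


def discover(start: str, get_links: Callable[[str], Iterable[str]],
             max_pages: int = 10_000) -> List[str]:
    """Breadth-first crawl of same-host URLs, returned in discovery order."""
    host = urlsplit(start).netloc
    seen = {start}
    order = []
    queue = deque([start])
    while queue and len(order) < max_pages:
        url = queue.popleft()
        order.append(url)
        for href in get_links(url):
            # Resolve relative links and drop #fragments before deduplicating.
            absolute, _ = urldefrag(urljoin(url, href))
            if urlsplit(absolute).netloc == host and absolute not in seen:
                seen.add(absolute)
                queue.append(absolute)
    return order


# A tiny in-memory "site" standing in for real HTTP fetches.
site = {
    "https://example.com/": ["/products/", "/blog/", "https://other.example/"],
    "https://example.com/products/": ["1", "2#reviews", "/"],
    "https://example.com/blog/": [],
}
print(discover("https://example.com/", lambda u: site.get(u, [])))
```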

Truth is render parity, not “view source.”

That matters because Google crawls from mobile now, and that model keeps tightening. According to Technical SEO Audit: Easy Guide to a Comprehensive Audit, 100% of crawling comes through mobile.

Then we run deterministic rule evaluation.

Each rule has inputs, a pass or fail, and proof artifacts.

No fuzzy scoring. No “maybe it’s fine.”
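A deterministic rule can be modeled as a pure function from a page snapshot to a pass/fail result that carries its own evidence. The `Page` fields and the two example rules below are hypothetical, and the second rule assumes the pages checked are meant to be indexable. The property that matters is that identical inputs always yield identical verdicts, plus the artifacts that justify them.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass(frozen=True)
class Page:
    url: str
    status: int
    canonical: Optional[str]
    robots: Optional[str]


@dataclass(frozen=True)
class Result:
    rule_id: str
    url: str
    passed: bool
    evidence: str


Rule = Callable[[Page], Result]


def canonical_present(page: Page) -> Result:
    return Result("canonical_present", page.url, bool(page.canonical),
                  f"canonical={page.canonical!r}")


def not_noindexed(page: Page) -> Result:
    # Assumes every page passed in is expected to be indexable.
    noindex = "noindex" in (page.robots or "").lower()
    return Result("not_noindexed", page.url, not noindex,
                  f"meta robots={page.robots!r}")


def evaluate(pages: List[Page], rules: List[Rule]) -> List[Result]:
    """Apply every rule to every page: no scoring, just verdicts plus proof."""
    return [rule(page) for page in pages for rule in rules]


pages = [
    Page("https://example.com/p/1", 200, "https://example.com/p/1", None),
    Page("https://example.com/p/2", 200, None, "noindex, follow"),
]
for r in evaluate(pages, [canonical_present, not_noindexed]):
    if not r.passed:
        print(f"FAIL {r.rule_id} {r.url} ({r.evidence})")
```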

This is also where we keep HTTPS boring and strict.

If the crawl finds HTTP, the render must confirm redirects.

If redirects loop, we fail the rule and show the chain.
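The HTTPS rule reduces to following the redirect chain and checking where it ends. This sketch runs against a hypothetical in-memory map of URL to redirect target; in practice you would build it by requesting each hop without auto-following redirects. It fails on loops, on chains that never reach HTTPS, and on chains longer than an assumed hop budget, and it always returns the chain as evidence.

```python
from typing import Dict, List, Tuple


def check_https_redirect(start: str, redirects: Dict[str, str],
                         max_hops: int = 5) -> Tuple[bool, str, List[str]]:
    """Follow redirects from `start`; return (passed, reason, chain)."""
    chain = [start]
    seen = {start}
    url = start
    while url in redirects:
        url = redirects[url]
        chain.append(url)
        if url in seen:
            return False, "redirect loop", chain
        seen.add(url)
        if len(chain) - 1 > max_hops:  # hops = chain length minus the start URL
            return False, f"more than {max_hops} hops", chain
    if not url.startswith("https://"):
        return False, "final URL is not HTTPS", chain
    return True, "ok", chain


redirects = {
    "http://example.com/a": "https://example.com/a",
    "http://example.com/loop": "http://example.com/loop2",
    "http://example.com/loop2": "http://example.com/loop",
}
for start in ["http://example.com/a", "http://example.com/loop"]:
    passed, reason, chain = check_https_redirect(start, redirects)
    print("PASS" if passed else "FAIL", reason, " -> ".join(chain))
```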

How we prioritize: impact scoring tied to templates and traffic

Severity can’t live in a vacuum.

We tie impact to what the business actually ships.

We map URLs to templates and routes.

Then we attach ownership: code owners, repos, and deploy artifacts.

If a canonical bug affects a product template, it hits harder. That aligns with the failure modes most technical SEO guides still flag - canonicals, crawlability, and indexation controls (BruceClay).
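Mapping URLs to templates and owners can start as an ordered route table. The patterns, template names, team handles, and repo names below are hypothetical; in a real setup they would come from the router configuration and an ownership file such as CODEOWNERS.

```python
import re
from typing import NamedTuple, Optional
from urllib.parse import urlsplit


class Route(NamedTuple):
    pattern: re.Pattern
    template: str
    owner: str
    repo: str


# Hypothetical route table; first match wins, so list specific routes first.
ROUTES = [
    Route(re.compile(r"^/products/[^/]+/?$"), "product_detail", "@shop-frontend", "web-storefront"),
    Route(re.compile(r"^/c/[^/]+(/[^/]+)*/?$"), "category", "@shop-frontend", "web-storefront"),
    Route(re.compile(r"^/blog/[^/]+/?$"), "blog_post", "@content-platform", "web-cms"),
]


def resolve(url: str) -> Optional[Route]:
    """Return the first route whose pattern matches the URL path."""
    path = urlsplit(url).path
    for route in ROUTES:
        if route.pattern.match(path):
            return route
    return None


route = resolve("https://example.com/products/red-shoes?color=red")
if route is not None:
    print(route.template, route.owner, route.repo)
else:
    print("unmapped")
```

Unmapped URLs are worth reporting too: a finding nobody owns is a finding nobody fixes.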

We also weight by observed demand signals.

Not “sitewide.” Not “best practice.” Real templates, real traffic paths.
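Weighting by demand can be as simple as multiplying a rule's severity by the share of traffic that lands on the affected template. The severity weights and session counts below are made-up placeholders, and the default weight for unknown rules is an assumption; sessions would come from whatever analytics or log source you trust. The sketch only shows the shape of the calculation.

```python
from typing import Dict, List, Tuple

# Hypothetical severity weights per rule (assumption, tune per site).
SEVERITY = {"canonical_mismatch": 1.0, "noindex_on_indexable": 1.0, "missing_h1": 0.3}
DEFAULT_SEVERITY = 0.5  # assumed weight for rules not listed above


def impact_scores(findings: List[Tuple[str, str]],
                  sessions_by_template: Dict[str, int]) -> List[Tuple[str, str, float]]:
    """Score (rule_id, template) findings by severity x template traffic share."""
    total = sum(sessions_by_template.values()) or 1  # avoid dividing by zero
    scored = []
    for rule_id, template in findings:
        share = sessions_by_template.get(template, 0) / total
        scored.append((rule_id, template, SEVERITY.get(rule_id, DEFAULT_SEVERITY) * share))
    return sorted(scored, key=lambda item: item[2], reverse=True)


sessions = {"product_detail": 60_000, "category": 30_000, "blog_post": 10_000}
findings = [("missing_h1", "product_detail"),
            ("canonical_mismatch", "category"),
            ("canonical_mismatch", "blog_post")]
for rule_id, template, score in impact_scores(findings, sessions):
    print(f"{score:.3f}  {rule_id} on {template}")
```

With these placeholder numbers, a canonical bug on the category template (1.0 x 0.3) outranks a missing H1 on the busier product template (0.3 x 0.6), because severity and traffic both count.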

That’s how you get engineers to act without a meeting.

How we operationalize: CI gates, tickets, and rechecks

We treat audits like regression control, not a quarterly event.

Every critical rule can run on staging before release.
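On staging, the gate itself can be a small script that runs the critical rules and exits non-zero on any blocking failure, which most CI systems treat as a failed step. The rule IDs, the `Result` shape, and the `CRITICAL_RULES` set are hypothetical stand-ins for the output of your own rule runner.

```python
import sys
from typing import Iterable, NamedTuple


class Result(NamedTuple):
    rule_id: str
    url: str
    passed: bool


# Hypothetical set of rules that block a release when they fail.
CRITICAL_RULES = {"https_redirect", "canonical_present", "not_noindexed"}


def gate(results: Iterable[Result]) -> int:
    """Print blocking failures to stderr and return a process exit code."""
    blocking = [r for r in results if not r.passed and r.rule_id in CRITICAL_RULES]
    for r in blocking:
        print(f"BLOCKING: {r.rule_id} failed on {r.url}", file=sys.stderr)
    return 1 if blocking else 0


staging_results = [
    Result("canonical_present", "https://staging.example.com/p/1", True),
    Result("missing_h1", "https://staging.example.com/blog/a", False),  # non-blocking
]
exit_code = gate(staging_results)
print("exit code:", exit_code)
# In a CI step, finish with sys.exit(exit_code) so a failure stops the release.
```

Keeping non-critical rules out of the gate is deliberate: they still produce tickets, but they do not hold a deploy hostage.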

When a rule fails, we open a ticket where work happens.

The ticket includes the exact URL, template, and reproduction steps.
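A ticket body like that can be generated straight from a failed result. The sketch builds a plain dict you could send to an issue tracker's API; `build_ticket` and its field names are hypothetical and do not follow any specific tracker's schema.

```python
import json
from typing import Dict, List


def build_ticket(rule_id: str, url: str, template: str, owner: str,
                 evidence: str, repro_steps: List[str]) -> Dict:
    """Assemble a tracker-agnostic ticket payload from one failed rule."""
    steps = "\n".join(f"{i}. {step}" for i, step in enumerate(repro_steps, start=1))
    return {
        "title": f"[SEO] {rule_id} on {template}",
        "assignee": owner,
        "labels": ["seo", f"template:{template}"],
        "body": (
            f"URL: {url}\n"
            f"Template: {template}\n"
            f"Evidence: {evidence}\n\n"
            f"Steps to reproduce:\n{steps}"
        ),
    }


ticket = build_ticket(
    rule_id="canonical_present",
    url="https://staging.example.com/products/123",
    template="product_detail",
    owner="@shop-frontend",
    evidence="canonical=None",
    repro_steps=["Open the URL with JavaScript enabled",
                 "Inspect <head> in the rendered DOM",
                 "Expect <link rel=\"canonical\"> pointing at the URL"],
)
print(json.dumps(ticket, indent=2))
```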

Then we recheck after deploy and record “fixed vs reintroduced.”

That history matters more than the first failure.

It tells us if a team learned, or if the system stayed fragile.
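Recording “fixed vs reintroduced” needs per-finding history across rechecks, not just the latest run. This sketch assumes a hypothetical ordered list of `(release, failing_keys)` snapshots, oldest first, and labels each finding's current state; the key format and release names are illustrative.

```python
from typing import Dict, List, Set, Tuple

Key = Tuple[str, str]  # hypothetical (rule_id, url) finding key


def classify(history: List[Tuple[str, Set[Key]]]) -> Dict[Key, str]:
    """Label each finding as open, fixed, or reintroduced after the latest run.

    `history` is ordered oldest to newest; each entry lists the failing keys.
    A finding is 'reintroduced' if it failed, passed at least once, and is
    failing again in the latest run.
    """
    all_keys = set().union(*(failing for _, failing in history))
    labels = {}
    for key in all_keys:
        fails = [key in failing for _, failing in history]
        first = fails.index(True)
        if not fails[-1]:
            labels[key] = "fixed"
        elif False in fails[first:]:
            labels[key] = "reintroduced"
        else:
            labels[key] = "open"
    return labels


history = [
    ("r41", {("canonical_present", "/p/1"), ("missing_h1", "/blog/a")}),
    ("r42", {("missing_h1", "/blog/a"), ("not_noindexed", "/c/x")}),
    ("r43", {("canonical_present", "/p/1"), ("missing_h1", "/blog/a")}),
]
for key, label in sorted(classify(history).items()):
    print(label, key)
```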

If you want the bigger picture on making results portable, our thinking connects with SEO Audit Data Export: Why Most Tools Hide Your Results.

For a visual walkthrough of the audit mindset, check out this tutorial from The Conversion Clinic - by JRR Marketing:

XYOUTUBEX1XYOUTUBEX

Counterarguments we take seriously

Can an seo audit tool replace a technical seo audit?

No - not fully, and we don’t pretend otherwise.

A strong technical seo audit includes judgment.

It handles politics, roadmap tradeoffs, and “why now” timing.

It also catches edge cases that rules miss, like odd faceting logic.

We built custom tooling anyway for a reason.

Our clients needed speed, consistency, and integration beyond generic outputs.

And when we need external benchmarks, we still cross-check against common mistake patterns and remediation playbooks (NAV43).

We don’t want more audits.

We want fewer repeat bugs, and clean deploys that stay clean.

What Our SEO Audit Tool Changed for Clients and What’s Next

When we put that yardstick on real client stacks, the wins stopped being abstract. We saw fewer indexation surprises because we caught conflicts early and verified the fix where it matters - in the crawlable, renderable output Google actually sees. We saw performance stabilize after releases because checks ran against staging and production, not against hopeful assumptions. And prioritization got sharper because findings tied directly to revenue templates - category pages, product detail pages, and the internal links that feed them - not to whatever looked scary in a dashboard.

This is why I think the next generation of seo audit tool platforms won’t feel like “SEO tools” at all. They’ll look like release safety systems. The winners will fuse three streams into one truth layer: crawl data (what bots can reach), render truth (what users and Google get after JS), and release metadata (what changed, when, and who owns it). When those connect, we stop reacting to regressions and start blocking them before they ship. That’s where technical SEO is headed in 2026 - prevention, not detection.

Some will argue this is overkill. “Just run a crawler and fix the biggest issues.” That misses the point. Modern sites don’t fail because teams don’t see issues. They fail because fixes don’t land cleanly, don’t get verified, or get reintroduced on the next deploy.

Our recommendation is blunt: stop buying audits as deliverables. Build an audit loop. Route findings to developers in the systems they already use, tie each issue to a deployable owner, and require post-release verification like any other production control. That’s how a technical seo audit becomes an engineering habit, not a quarterly fire drill.

Ready to build that loop into your release cycle? Reach out to learn more.